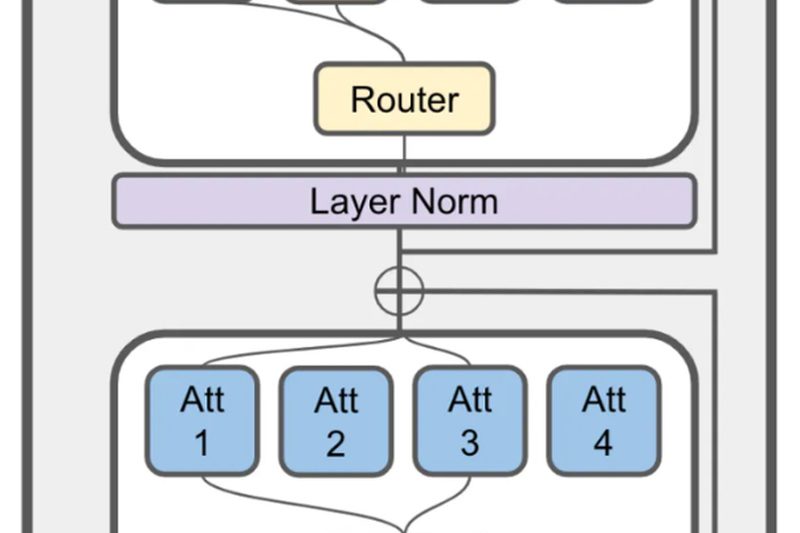

Building powerful language models used to be the exclusive domain of well-funded tech giants. But JetMoE is changing that narrative.…

Efficient Inference

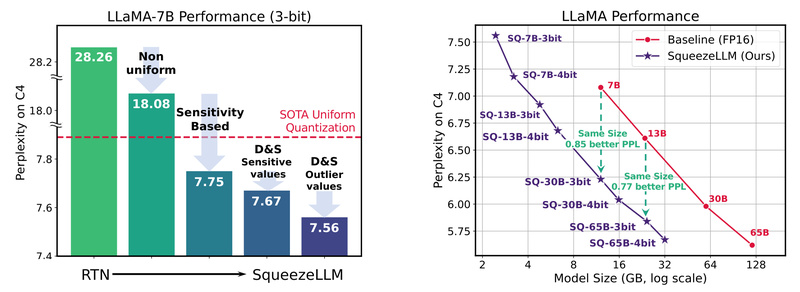

SqueezeLLM: Deploy High-Accuracy LLMs in Half the Memory Without Sacrificing Performance

Deploying large language models (LLMs) like LLaMA, Mistral, or Vicuna often demands multiple high-end GPUs, complex inference pipelines, and substantial…

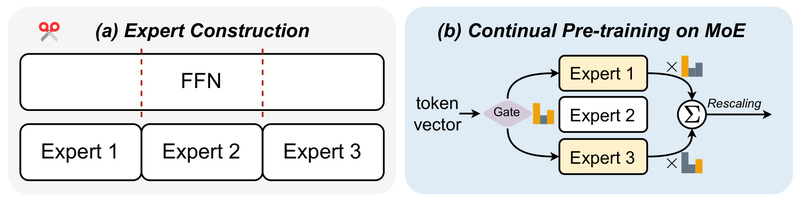

LLaMA-MoE: High-Performance Mixture-of-Experts LLM with Only 3.5B Active Parameters

If you’re a developer, researcher, or technical decision-maker working with large language models (LLMs), you’ve likely faced a tough trade-off:…

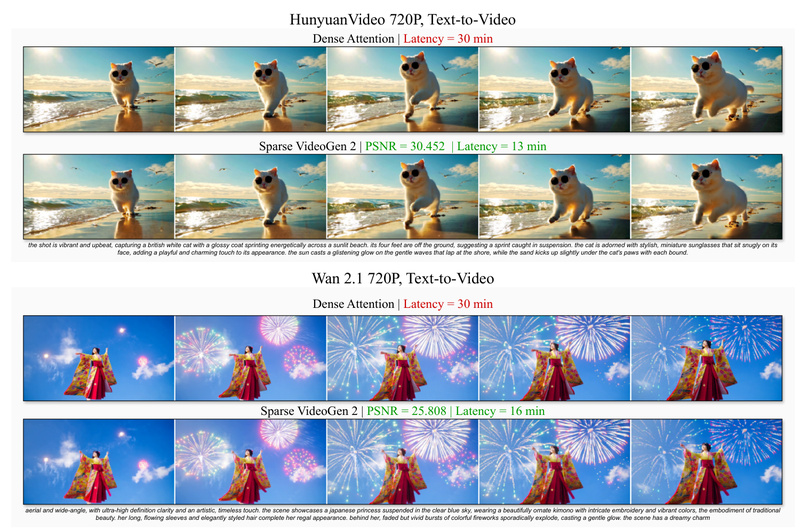

Sparse VideoGen2: Accelerate Video Diffusion Models 2.3x Without Retraining or Quality Loss

Video generation using diffusion transformers (DiTs) has reached remarkable visual fidelity—but at a steep computational cost. The quadratic complexity of…

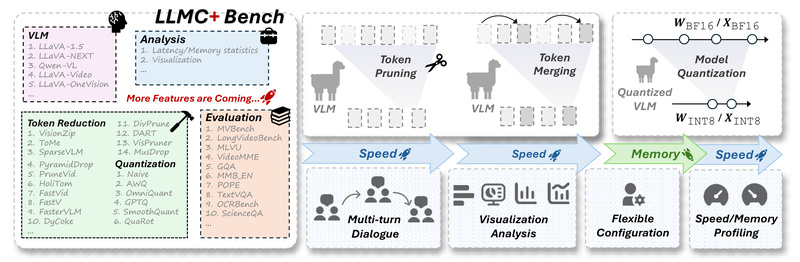

LLMC+: Plug-and-Play Compression for Vision-Language and Large Language Models Without Retraining

Deploying large vision-language models (VLMs) and large language models (LLMs) in real-world applications is often bottlenecked by their massive size,…

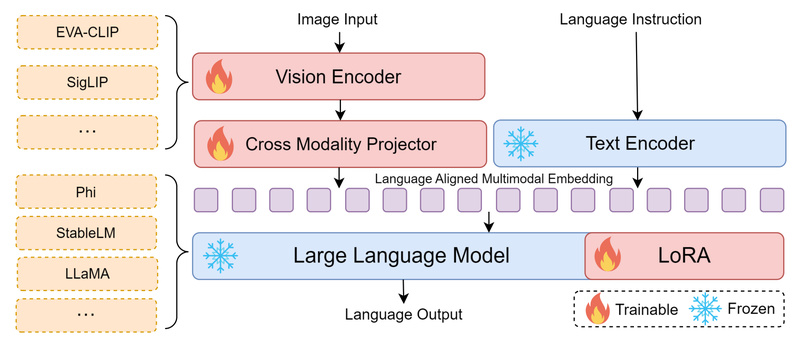

Bunny: High-Performance Multimodal AI Without the Heavy Compute Burden

Multimodal Large Language Models (MLLMs) are transforming how machines understand and reason about visual content. Yet, their adoption remains out…

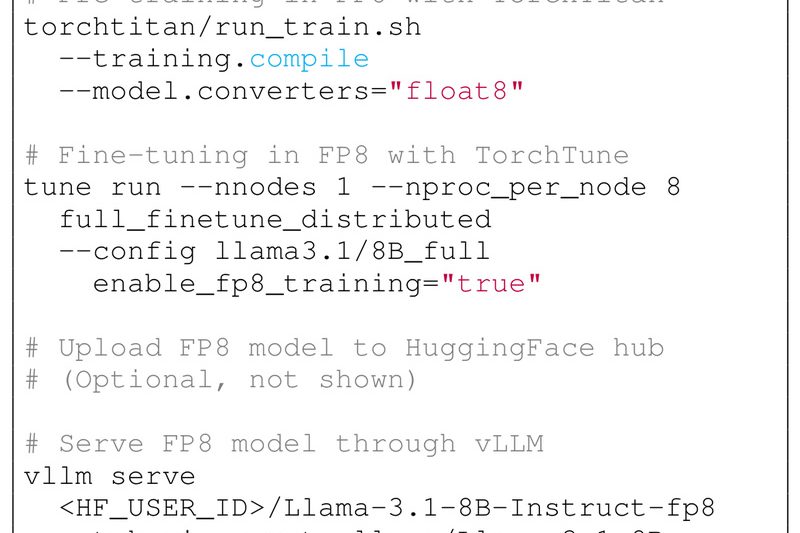

TorchAO: Unified PyTorch-Native Optimization for Faster Training and Efficient LLM Inference

Deploying large AI models in production often involves a fragmented toolchain: one set of libraries for training, another for quantization,…