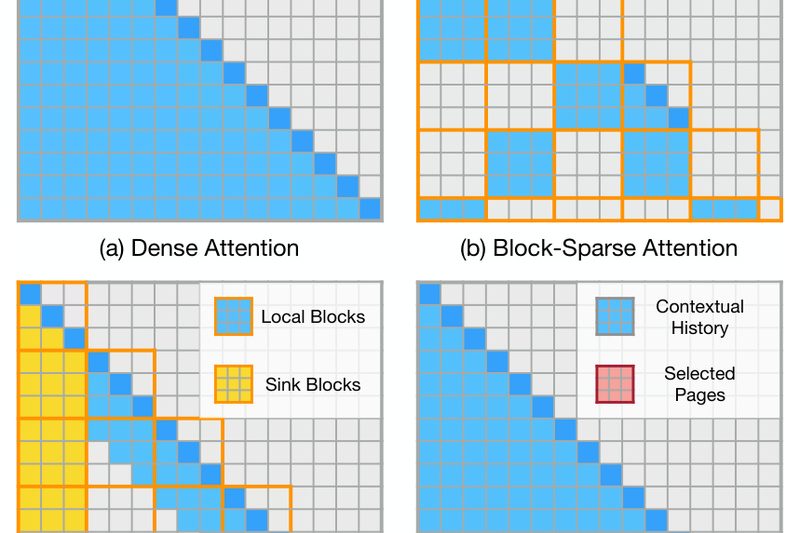

Deploying large language models (LLMs) to handle long documents, extensive chat histories, or detailed technical manuals remains a major bottleneck…

Efficient LLM Inference

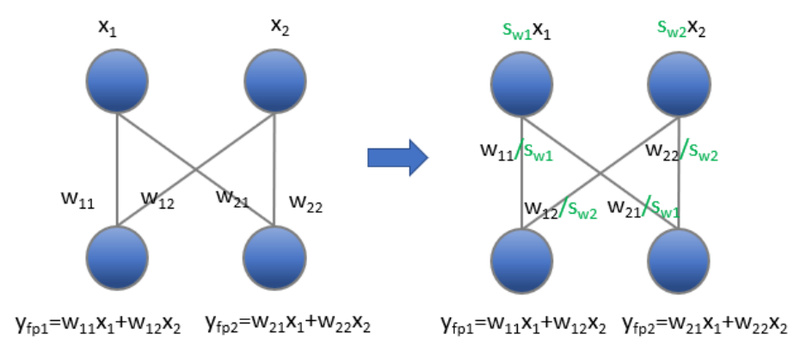

TEQ: Accurate 3- and 4-Bit LLM Quantization Without Inference Overhead

Deploying large language models (LLMs) in production often runs into a hard trade-off: reduce model size and latency through quantization,…