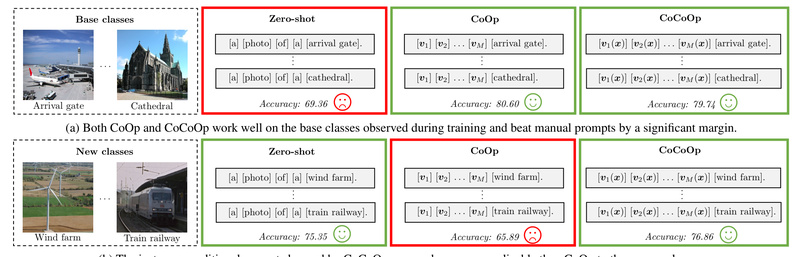

In the era of foundation models, CLIP (Contrastive Language–Image Pretraining) has revolutionized how we approach vision-language tasks—especially zero-shot image classification.…

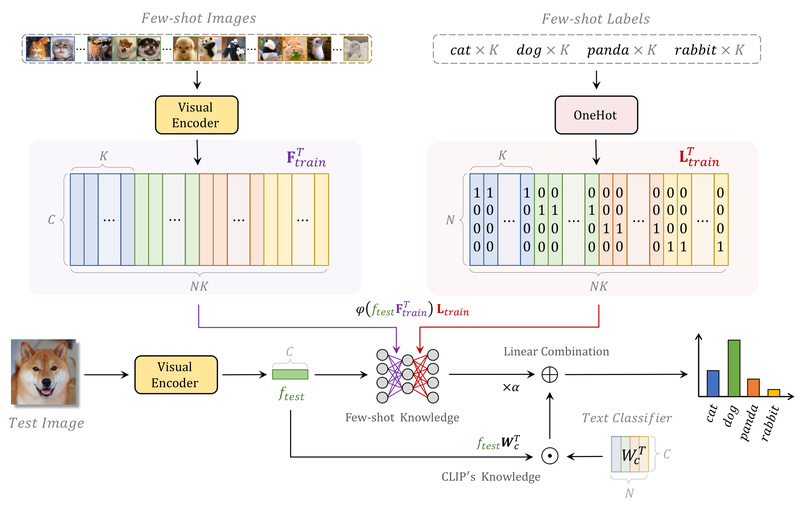

Few-shot Image Classification

CoOp: Adapt Vision-Language Models Like CLIP to Your Task with Just a Few Labels—No Full Fine-Tuning Needed

Imagine you have access to a powerful pre-trained vision-language model like CLIP—capable of understanding both images and text—but you need…