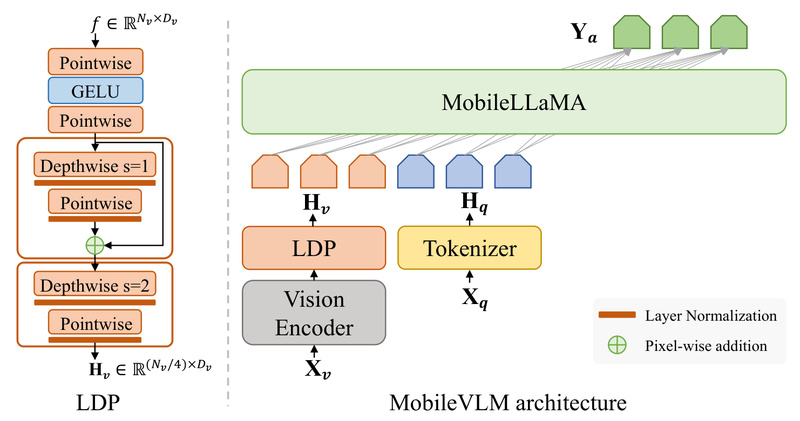

MobileVLM is a purpose-built vision-language model (VLM) engineered from the ground up for on-device deployment on smartphones and edge hardware.…

On-Device AI

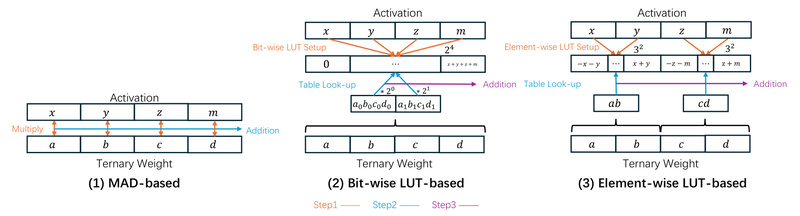

Bitnet.cpp: Run 1.58-Bit LLMs at the Edge with Lossless Speed and Efficiency

Large language models (LLMs) are becoming increasingly central to real-world applications—but their computational demands remain a major barrier for edge…

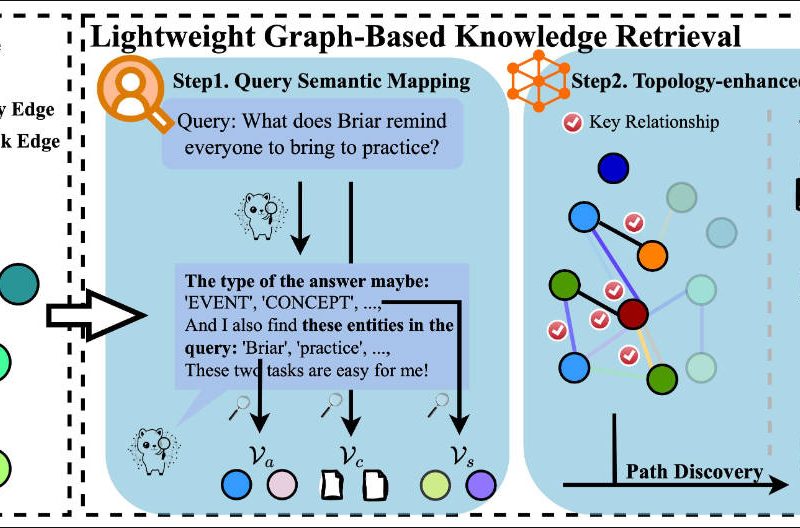

MiniRAG: Enable Small Language Models to Deliver Powerful RAG with Minimal Resources

Retrieval-Augmented Generation (RAG) has become a cornerstone technique for grounding language models in factual knowledge. However, traditional RAG pipelines struggle…