Deploying large language models (LLMs) in real-world applications remains a major engineering challenge. While models like LLaMA-2, Falcon, and Mixtral…

Post-training Quantization

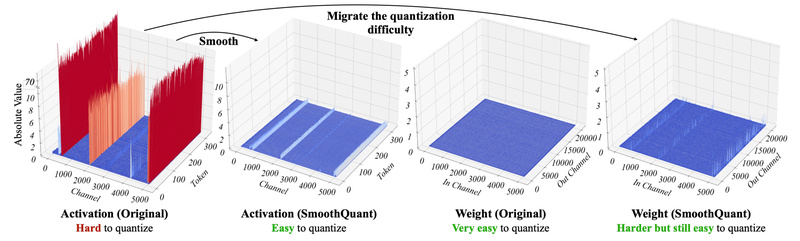

SmoothQuant: Accurate 8-Bit LLM Inference Without Retraining – Slash Memory and Boost Speed

Deploying large language models (LLMs) in production is expensive—not just in dollars, but in compute and memory. While models like…