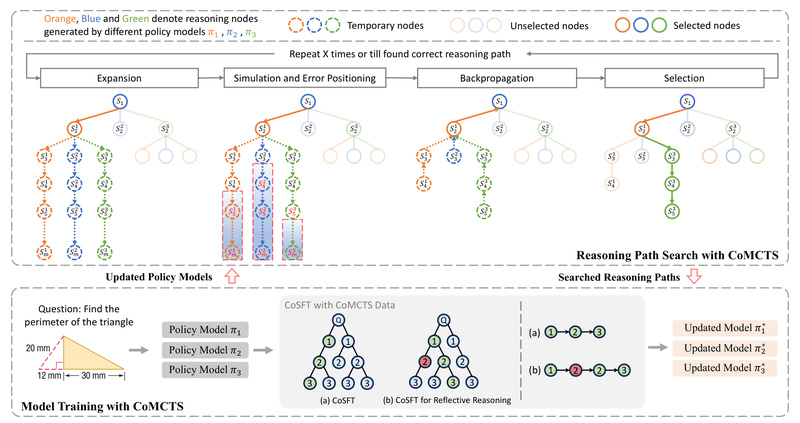

Traditional multimodal large language models (MLLMs) often produce answers without revealing how they got there, especially when dealing with complex questions…

Visual Question Answering

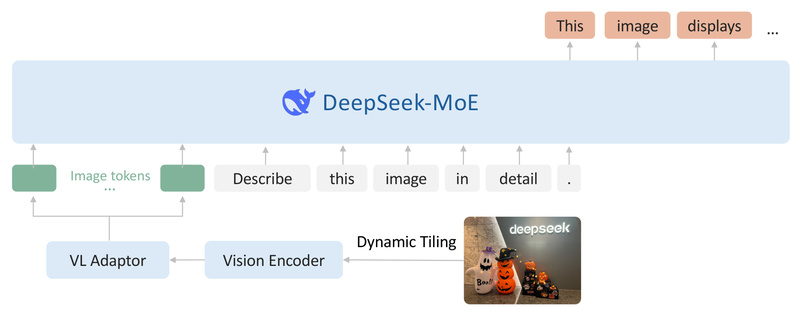

DeepSeek-VL2: High-Performance Vision-Language Understanding with Efficient Mixture-of-Experts Architecture 5072

DeepSeek-VL2 is an open-source, advanced vision-language model (VLM) built on a Mixture-of-Experts (MoE) architecture, engineered for robust multimodal understanding across…

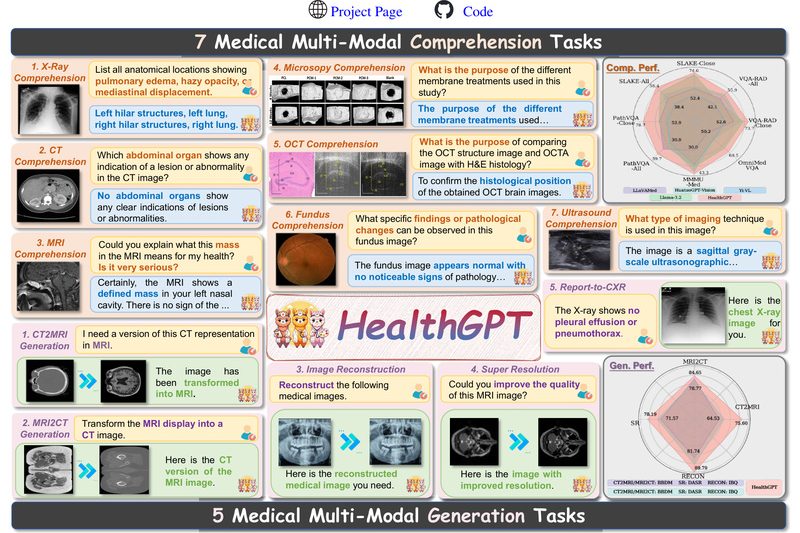

HealthGPT: Unified Medical Vision-Language Understanding and Generation in a Single Model 1567

HealthGPT is a cutting-edge Medical Large Vision-Language Model (Med-LVLM) designed to tackle a long-standing challenge in AI for healthcare: the…