If you’re building or scaling large language models (LLMs) and have access to NVIDIA GPU clusters, Megatron-LM—developed by NVIDIA—is one…

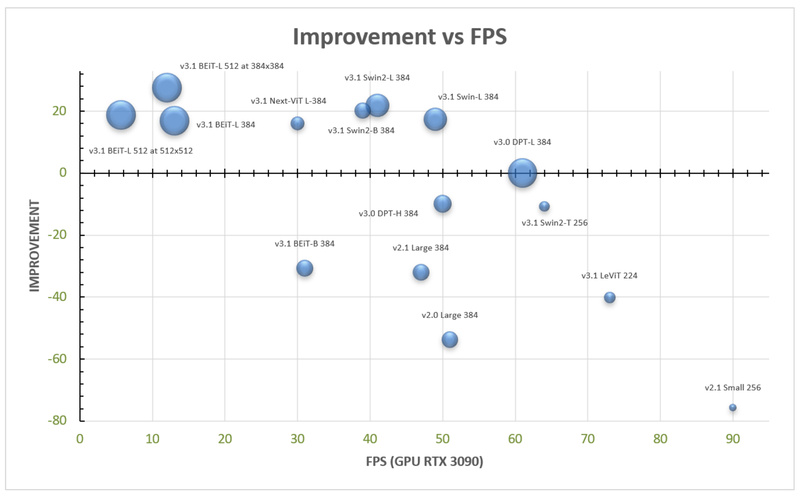

MiDaS: Robust Monocular Depth Estimation from a Single Image—No Special Hardware Required 5267

In today’s world of intelligent systems—from autonomous robots to immersive AR experiences—depth perception is essential. Yet most cameras only capture…

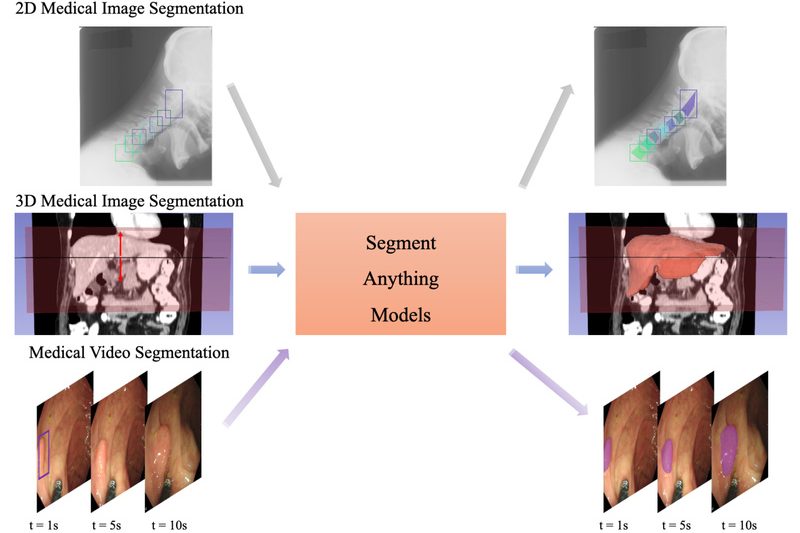

MedSAM: Accurate, Prompt-Based Medical Image Segmentation Out of the Box 3980

Medical image segmentation—the process of delineating anatomical structures or pathologies in scans like CT, MRI, or ultrasound—is foundational to diagnosis,…

3D-Speaker: High-Accuracy Speaker Verification and Diarization Made Accessible for Real-World Applications 2648

In the landscape of spoken language processing, accurately identifying who is speaking—across recordings, meetings, or voice-based interfaces—remains a critical yet…

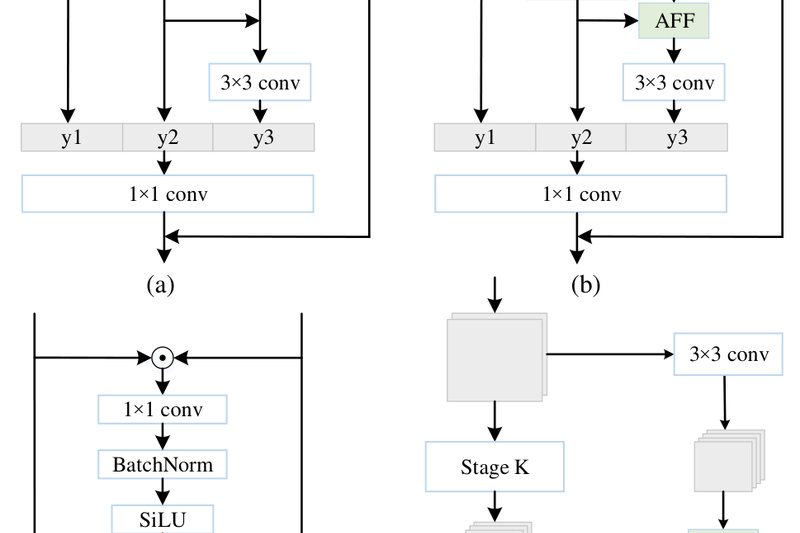

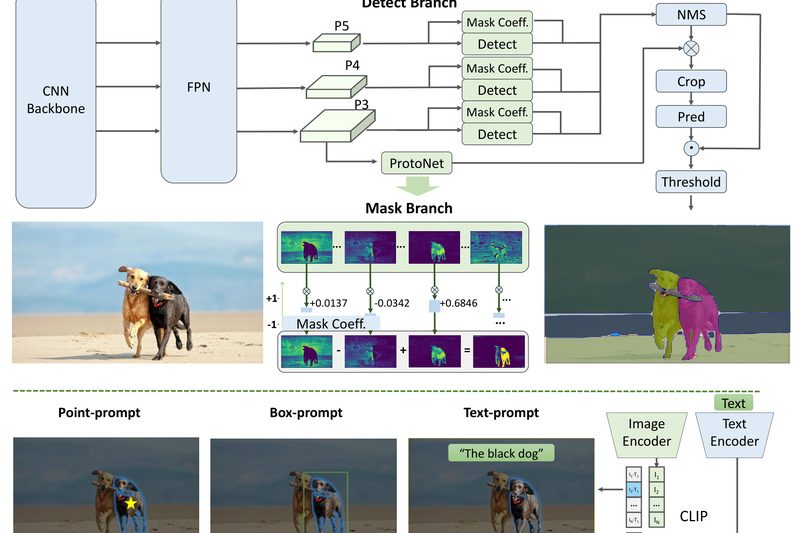

FastSAM: Real-Time Image Segmentation at 50x Speed Without Sacrificing Accuracy 8193

In today’s fast-paced computer vision landscape, high-quality image segmentation is no longer a luxury—it’s a necessity. Yet, despite the groundbreaking…

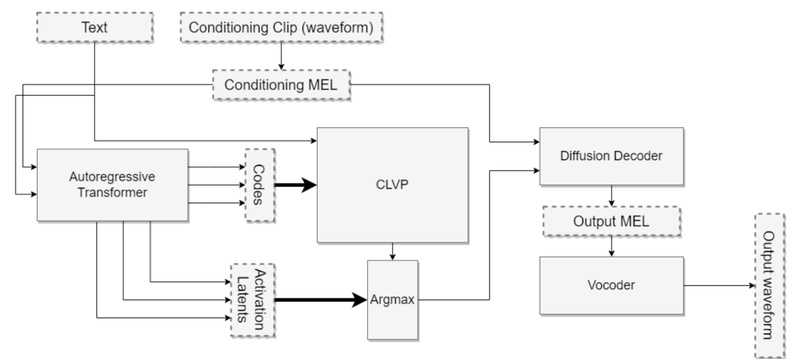

Tortoise-TTS: High-Quality, Multi-Voice Text-to-Speech with Realistic Prosody and Open-Source Flexibility 14737

Tortoise-TTS is an open-source text-to-speech (TTS) system designed for one core purpose: generating expressive, natural-sounding speech with strong multi-voice capabilities.…

InvSR: High-Quality Image Super-Resolution in 1–5 Steps Using Diffusion Inversion 1341

Image super-resolution (SR) remains a critical capability across computer vision applications—from upscaling smartphone photos to enhancing AI-generated content (AIGC). However,…

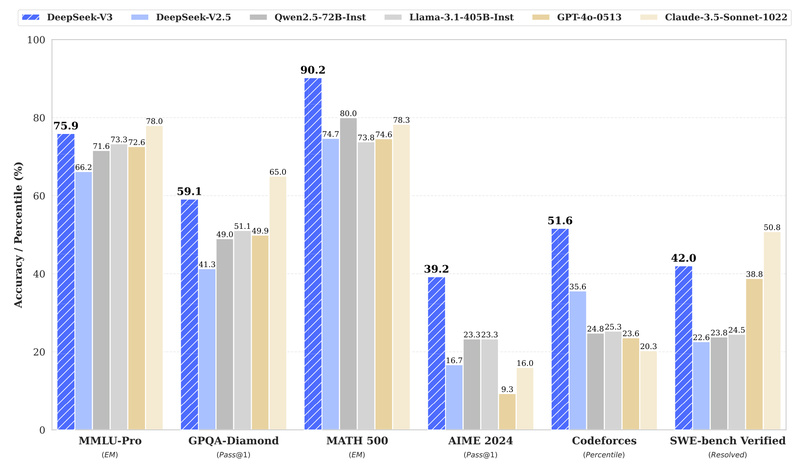

DeepSeek-V3: A High-Performance, Cost-Efficient MoE Language Model That Delivers Closed-Source Power with Open-Source Flexibility 100738

For technical decision-makers evaluating large language models (LLMs) for real-world applications, balancing raw capability, inference cost, training efficiency, and deployment…

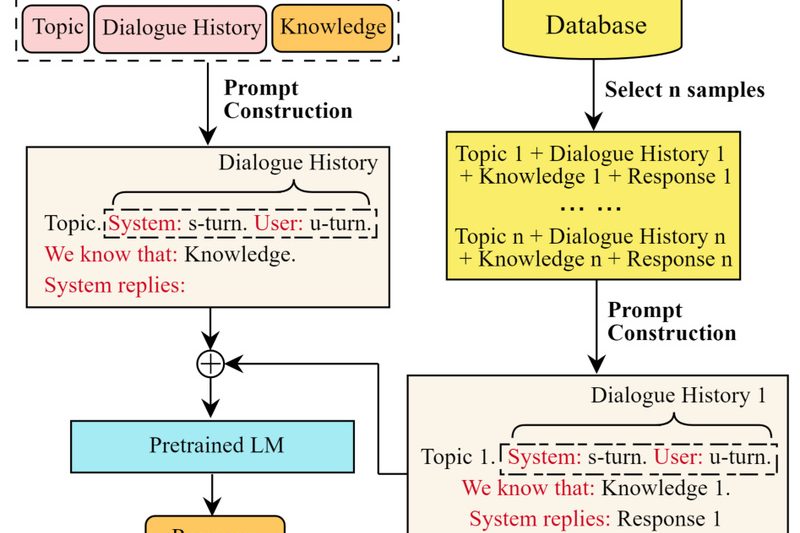

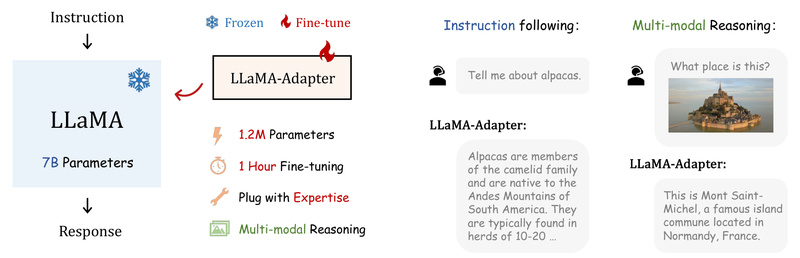

LLaMA-Adapter: Efficiently Transform LLaMA into Instruction-Following or Multimodal AI with Just 1.2M Parameters 5907

If you’re working on a project that requires a capable language model—but lack the GPU budget, time, or infrastructure for…

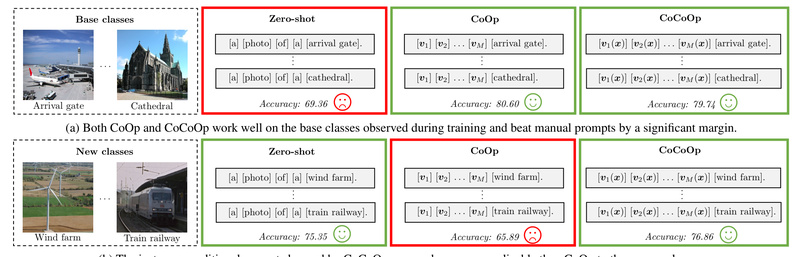

CoOp: Adapt Vision-Language Models Like CLIP to Your Task with Just a Few Labels—No Full Fine-Tuning Needed 2134

Imagine you have access to a powerful pre-trained vision-language model like CLIP—capable of understanding both images and text—but you need…