Traditional Retrieval-Augmented Generation (RAG) systems excel at answering questions using text-based documents—but they often stumble when faced with visually rich PDFs containing tables, charts, diagrams, or mixed layouts. These documents, common in scientific publications, technical manuals, financial reports, and government forms, demand joint understanding of both visual structure and textual semantics.

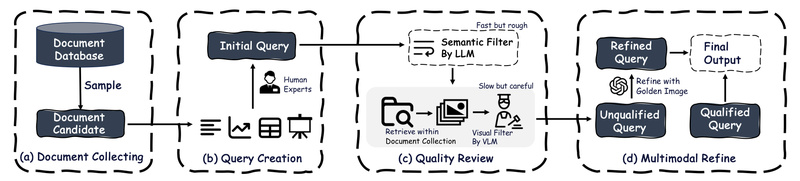

ViDoRAG, short for Visual Document Retrieval-Augmented Generation via Dynamic Iterative Reasoning Agents, is a RAG framework built for this challenge. Rather than only retrieving passages, it reasons iteratively across multimodal content using a team of specialized agents and a Gaussian Mixture Model (GMM)-based hybrid retrieval strategy that combines visual and textual signals. On the newly introduced ViDoSeek benchmark, ViDoRAG outperforms prior methods by over 10%, setting a new state of the art for complex question answering over dense visual documents.

Why Traditional RAG Falls Short on Visual Documents

Most RAG pipelines treat documents as pure text. When applied to PDFs with figures, tables, or multi-column layouts, this approach loses critical context:

- Visual cues (e.g., chart axes, diagram labels) are ignored during retrieval.

- Spatial relationships between text and graphics vanish after naive OCR.

- Multi-hop reasoning across pages becomes unreliable due to fragmented or noisy retrieved snippets.

Even vision-language models (VLMs) struggle when used naively—they’re powerful at single-image QA but lack robust retrieval-aware reasoning over large document collections. ViDoRAG directly addresses these gaps with a co-designed retrieval and reasoning architecture.

Core Innovations That Power ViDoRAG

Hybrid Multi-Modal Retrieval with GMM Fusion

ViDoRAG introduces a dynamic hybrid retriever that combines:

- A vision-language embedding model (e.g., ColQwen) for visual-page understanding.

- A text embedding model (e.g., BAAI/bge-m3) for semantic matching over extracted text.

Instead of naively averaging or concatenating scores, ViDoRAG uses a Gaussian Mixture Model to dynamically weight visual vs. textual relevance based on query characteristics. This adaptability ensures high recall for both factoid queries (“What’s the value in Table 3?”) and reasoning-intensive ones (“Compare the trends in Figures 2 and 5”).
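The exact GMM formulation is not spelled out here, so the following is only one plausible sketch of the idea. It assumes that, for each modality, a two-component mixture is fitted to the query's similarity scores, that pages more likely to belong to the high-scoring component are kept, and that the two kept sets are then merged. Under that assumption, the modality whose scores separate more cleanly contributes more candidates, which is one way the balance can shift per query. The function names and scores are hypothetical, and the small pure-Python EM loop stands in for a library implementation such as scikit-learn's GaussianMixture.

```python
import math


def _weighted_pdf(x, weight, mean, var):
    """Weighted Gaussian density of x under one mixture component."""
    return weight * math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)


def fit_two_gaussians(scores, iters=50, min_var=1e-4):
    """Fit a 1-D two-component Gaussian mixture with plain EM.

    Returns (weights, means, variances). Components are initialised at the
    lowest and highest score; variances are floored at min_var.
    """
    means = [min(scores), max(scores)]
    spread = means[1] - means[0]
    variances = [max((spread / 4) ** 2, min_var)] * 2
    weights = [0.5, 0.5]
    for _ in range(iters):
        # E-step: responsibility of each component for each score.
        resp = []
        for s in scores:
            dens = [_weighted_pdf(s, w, m, v) for w, m, v in zip(weights, means, variances)]
            total = sum(dens) or 1e-300
            resp.append([d / total for d in dens])
        # M-step: re-estimate weight, mean and variance of each component.
        for k in range(2):
            nk = sum(r[k] for r in resp) or 1e-12
            weights[k] = nk / len(scores)
            means[k] = sum(r[k] * s for r, s in zip(resp, scores)) / nk
            var = sum(r[k] * (s - means[k]) ** 2 for r, s in zip(resp, scores)) / nk
            variances[k] = max(var, min_var)
    return weights, means, variances


def adaptive_top_k(scored_pages):
    """Keep pages whose score is better explained by the high-mean component."""
    scores = [s for _, s in scored_pages]
    if len(scores) < 3 or max(scores) == min(scores):
        return [page for page, _ in scored_pages]  # too little data to separate
    weights, means, variances = fit_two_gaussians(scores)
    hi = 0 if means[0] > means[1] else 1
    lo = 1 - hi
    kept = []
    for page, s in scored_pages:
        p_hi = _weighted_pdf(s, weights[hi], means[hi], variances[hi])
        p_lo = _weighted_pdf(s, weights[lo], means[lo], variances[lo])
        # The second condition guards against a wide high component
        # claiming scores that lie below the low cluster.
        if p_hi > p_lo and s > means[lo]:
            kept.append(page)
    return kept


def hybrid_retrieve(visual_scores, text_scores):
    """Merge the adaptively cut visual and textual candidates, visual first."""
    merged = adaptive_top_k(visual_scores) + adaptive_top_k(text_scores)
    return list(dict.fromkeys(merged))  # drop duplicates, keep order


if __name__ == "__main__":
    # Made-up similarity scores for a single query (illustrative only).
    visual = [("p1", 0.91), ("p2", 0.88), ("p3", 0.22), ("p4", 0.18), ("p5", 0.15), ("p6", 0.20)]
    text = [("p2", 0.80), ("p7", 0.78), ("p3", 0.30), ("p4", 0.25), ("p5", 0.28), ("p6", 0.27)]
    print(hybrid_retrieve(visual, text))
```

The point of the design is that no fixed top-k is chosen in advance: the cutoff follows the shape of the score distribution for each query and each modality.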

Iterative Multi-Agent Reasoning Workflow

Retrieval is just the start. ViDoRAG’s true edge lies in its actor-critic multi-agent system, which performs three coordinated steps:

- Exploration: The agent identifies candidate pages and extracts relevant multimodal snippets.

- Summarization: It condenses evidence into a coherent intermediate representation.

- Reflection: A critic evaluates consistency and gaps, triggering re-retrieval if needed.

This loop mimics expert human behavior—iteratively refining hypotheses based on document evidence—and enables test-time scaling: more reasoning steps often lead to higher accuracy on complex queries.
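The control flow of such a loop can be sketched in a few lines. This is a minimal illustration, not the project's agent code: the three callables stand in for VLM-backed agents, and their names, signatures, and the toy page data are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LoopState:
    question: str
    evidence: List[str] = field(default_factory=list)
    draft: str = ""
    rounds: int = 0


def iterative_answer(question, explore, summarize, reflect, max_rounds=3):
    """Run explore -> summarize -> reflect until the critic accepts the draft.

    explore(query, evidence)            -> list of new evidence snippets
    summarize(question, evidence)       -> draft answer
    reflect(question, draft, evidence)  -> (is_sufficient, follow_up_query)
    """
    state = LoopState(question)
    query = question
    while state.rounds < max_rounds:
        state.rounds += 1
        state.evidence.extend(explore(query, state.evidence))
        state.draft = summarize(question, state.evidence)
        sufficient, follow_up = reflect(question, state.draft, state.evidence)
        if sufficient:
            break
        # The critic found a gap: search again with its follow-up query.
        query = follow_up or question
    return state


# Toy stand-ins for the agents (hypothetical data, no model calls).
PAGES = {
    "page 22": "pressure-time graph: safety threshold 4.0 bar",
    "appendix B": "listed safety threshold 3.5 bar",
}


def toy_explore(query, evidence):
    hits = [f"{name}: {text}" for name, text in PAGES.items() if name.lower() in query.lower()]
    return [h for h in hits if h not in evidence]


def toy_summarize(question, evidence):
    return " | ".join(evidence)


def toy_reflect(question, draft, evidence):
    if "appendix B" not in draft:
        return False, "appendix B"  # evidence gap: ask for the missing page
    return True, None


if __name__ == "__main__":
    result = iterative_answer(
        "Compare the graph on page 22 with the listed values",
        toy_explore, toy_summarize, toy_reflect,
    )
    print(result.rounds, result.draft)
```

The `max_rounds` parameter is where the test-time scaling trade-off shows up: raising it allows more re-retrieval passes at the cost of more model calls.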

Practical Use Cases for Technical Decision-Makers

ViDoRAG shines in domains where documents are information-dense, multimodal, and require cross-page synthesis:

- Scientific Research: Answering questions about experimental setups or results across figures and methods sections in PDF papers.

- Financial & Legal Analysis: Extracting obligations, figures, or clauses from contracts or annual reports where tables and footnotes carry critical meaning.

- Technical Documentation: Troubleshooting equipment using manuals that combine schematics, part lists, and procedural text.

- Regulatory Compliance: Interpreting government forms or standards documents that mix structured tables with explanatory paragraphs.

If your team deals with queries like “What safety thresholds are shown in the pressure-time graph on page 22, and how do they compare to the values listed in Appendix B?”, ViDoRAG is purpose-built for this.

Getting Started: A Modular, Flexible Workflow

ViDoRAG is designed for easy integration. Here’s how to adopt it in your pipeline:

Step 1: Prepare Your Document Corpus

Convert PDFs to images using the provided pdf2images.py. Optionally, run OCR—either with traditional engines (ocr_triditional.py) or VLMs (ocr_vlms.py)—to enrich image metadata with recognized text.
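For readers who want to see roughly what a conversion step like this involves, here is a minimal sketch. It assumes the third-party pdf2image package (which needs Poppler installed) and a hypothetical `<pdf name>_<page number>.jpg` naming scheme; the project's own pdf2images.py may work differently.

```python
from pathlib import Path


def page_image_path(out_dir, pdf_path, page_number):
    """Build a per-page file name such as 'report_3.jpg' (hypothetical scheme)."""
    return Path(out_dir) / f"{Path(pdf_path).stem}_{page_number}.jpg"


def pdf_to_page_images(pdf_path, out_dir, dpi=200):
    """Render every page of one PDF to a JPEG and return the written paths."""
    # Imported lazily so the naming helper above works without the dependency.
    from pdf2image import convert_from_path

    Path(out_dir).mkdir(parents=True, exist_ok=True)
    written = []
    for number, image in enumerate(convert_from_path(str(pdf_path), dpi=dpi), start=1):
        target = page_image_path(out_dir, pdf_path, number)
        image.save(target, "JPEG")
        written.append(target)
    return written
```

Keeping the page number in the file name makes it easy to map a retrieved image back to its source document and page later in the pipeline.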

Step 2: Build a Multi-Modal Index

Using LlamaIndex under the hood, ViDoRAG ingests your documents via ingestion.py, embedding each page with your chosen vision-language and text models.

Step 3: Run Retrieval—Choose Your Mode

- Text-only: Useful for purely semantic queries.

- Visual-only: Best when layout or graphics dominate relevance.

- Hybrid (GMM-enabled): Recommended for mixed-modality queries—automatically balances modalities.

All options are exposed via clean Python APIs in search_engine.py.
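As a rough illustration of how the three modes relate, the sketch below dispatches a query to one or both backends. It is not the interface of search_engine.py: the `Mode` enum, the `retrieve` function, and the searcher signature are hypothetical, and the hybrid branch uses a plain order-preserving merge instead of the GMM-based step.

```python
from enum import Enum
from typing import Callable, List

# A searcher takes (query, top_k) and returns ranked page ids.
Searcher = Callable[[str, int], List[str]]


class Mode(Enum):
    TEXT = "text"
    VISUAL = "visual"
    HYBRID = "hybrid"


def retrieve(query: str, mode: Mode, text_search: Searcher,
             visual_search: Searcher, top_k: int = 5) -> List[str]:
    """Route a query to the text backend, the visual backend, or both."""
    if mode is Mode.TEXT:
        return text_search(query, top_k)
    if mode is Mode.VISUAL:
        return visual_search(query, top_k)
    # Hybrid: query both backends and merge, dropping duplicates in order.
    merged = visual_search(query, top_k) + text_search(query, top_k)
    return list(dict.fromkeys(merged))
```

Passing the backends in as callables keeps the dispatcher independent of any particular embedding model, which matches the modular design described in this section.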

Step 4: Generate Answers with Multi-Agent Reasoning

Pass retrieved images and text to the ViDoRAG_Agents class, which orchestrates the iterative reasoning loop using your preferred VLM (e.g., Qwen-VL-Max or GPT-4o).

The entire pipeline is modular: you can swap in your own embedding models, VLMs, or even replace the agent logic while reusing the retrieval core.

Limitations and Real-World Considerations

While powerful, ViDoRAG isn’t a silver bullet:

- Model Dependencies: Performance relies on strong external models (e.g., ColQwen for embedding, Qwen-VL for generation).

- Preprocessing Overhead: Requires PDF-to-image conversion and optional OCR—adding steps compared to pure-text RAG.

- Compute Cost: The iterative agent loop adds model calls and latency compared with single-pass generation, a cost that mainly pays off on complex queries.

- Query Scope: Optimized for multi-hop, reasoning-heavy questions; overkill for simple lookups like “What’s the author’s name?”

For production use, expect to tune retrieval thresholds and agent iteration depth based on your domain’s document complexity and latency budget.

Summary

ViDoRAG extends RAG to visually rich documents. By combining adaptive hybrid retrieval with iterative multi-agent reasoning, it tackles the real-world messiness of PDFs that blend text, tables, and graphics, which text-only RAG pipelines handle poorly. If your projects involve extracting insights from complex, multimodal documents at scale, ViDoRAG offers a robust, open-source foundation to build upon. With its modular design and strong benchmark performance, it’s a compelling choice for technical teams ready to move beyond text-only QA.