Generating high-quality images from text prompts has become remarkably powerful thanks to diffusion models like Stable Diffusion. Yet, for many…

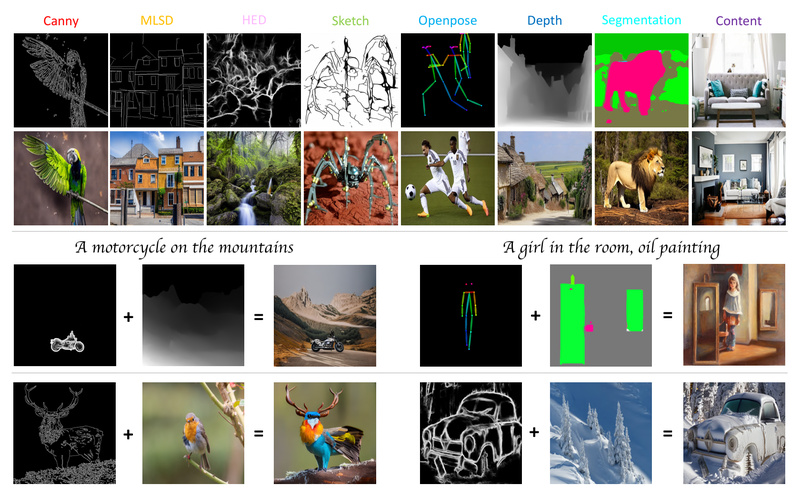

Controllable Diffusion Models

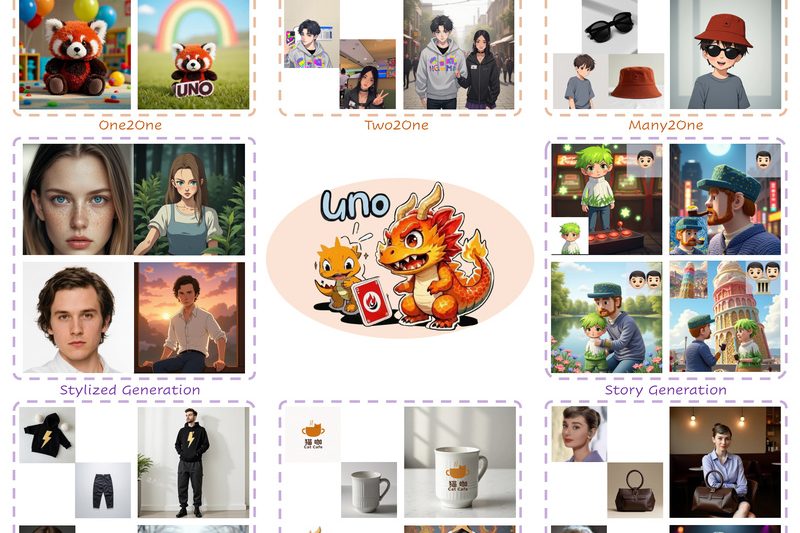

Less-to-More Generalization: Unlock Controllable, Consistent Multi-Subject Image Generation with UNO

Subject-driven image generation—where users provide one or more reference images of specific objects to guide the creation of new scenes—is…

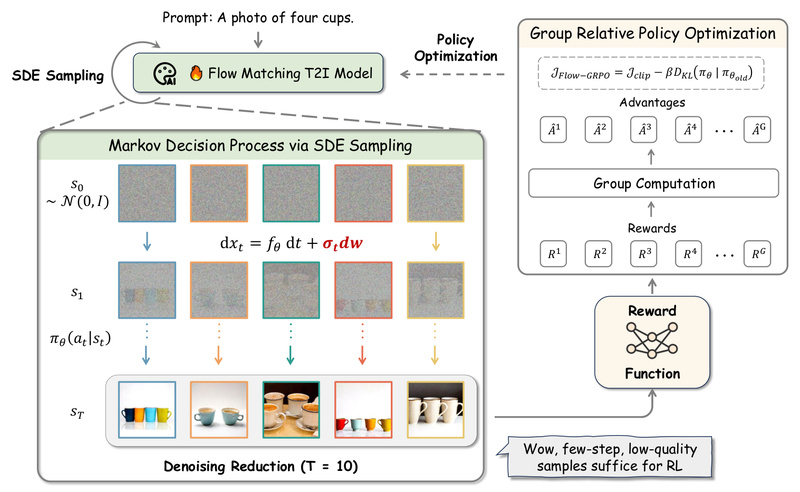

Flow-GRPO: Boost Text-to-Image Accuracy with Online RL—Without Sacrificing Quality or Diversity

If you’ve ever struggled with diffusion models failing to follow detailed prompts—like “a golden retriever sitting to the left of…