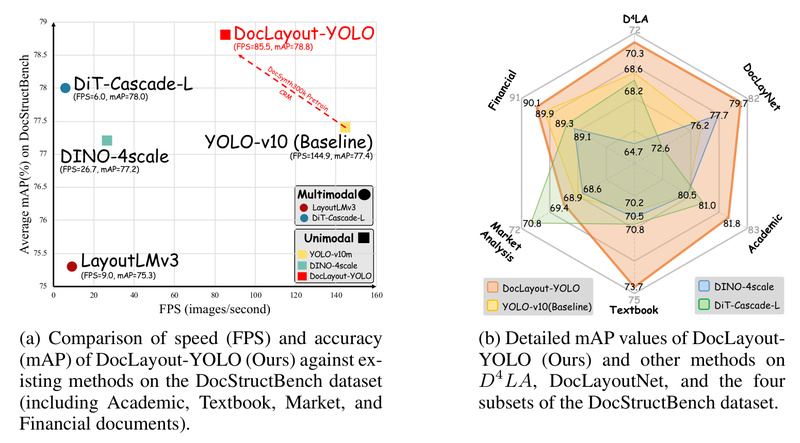

Document layout analysis (DLA) is a foundational task in building real-world document understanding systems—whether you’re extracting structured data from invoices,…

Document Understanding

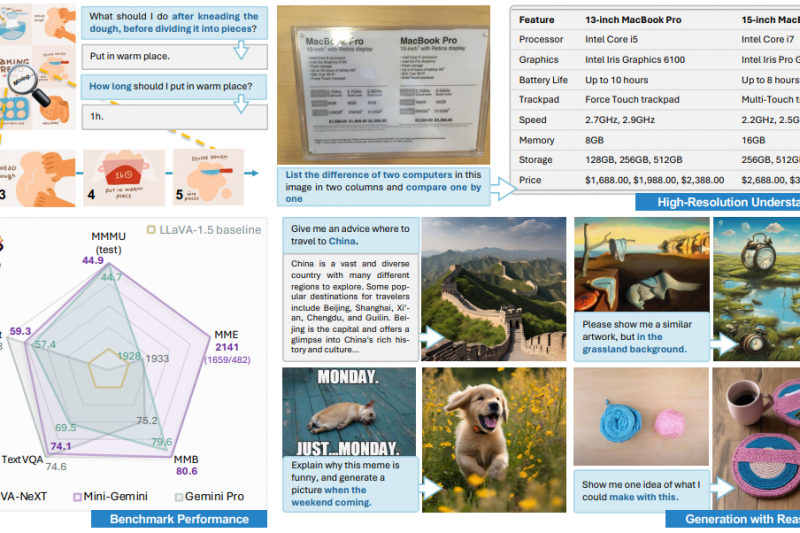

Mini-Gemini: Close the Gap with GPT-4V and Gemini Using Open, High-Performance Vision-Language Models 3323

In today’s AI landscape, multimodal systems that understand both images and language are no longer a luxury—they’re a necessity. Yet,…

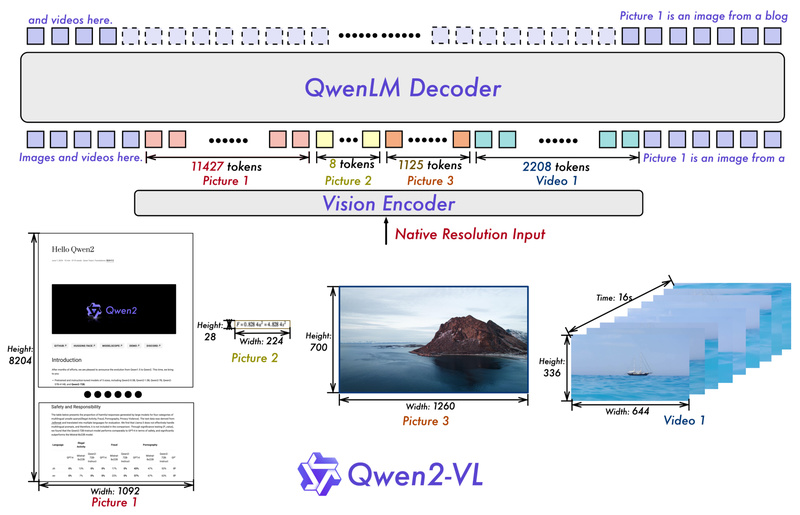

Qwen2-VL: Process Any-Resolution Images and Videos with Human-Like Visual Understanding 17241

Vision-language models (VLMs) are increasingly essential for tasks that require joint understanding of images, videos, and text—ranging from document parsing…

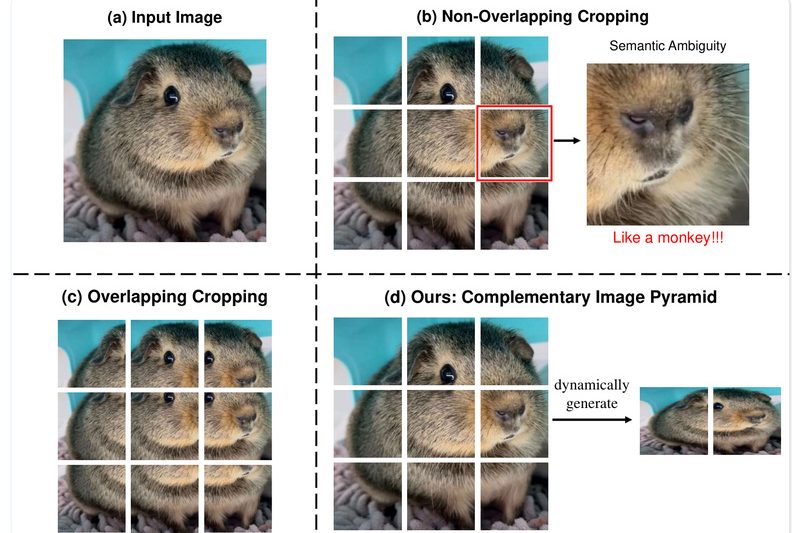

Mini-Monkey: Fixing Fragmented Vision in Lightweight Multimodal Models with Smart Multi-Scale Cropping 1923

When it comes to deploying multimodal large language models (MLLMs) in real-world applications—especially on cost-sensitive or edge devices—lightweight models are…

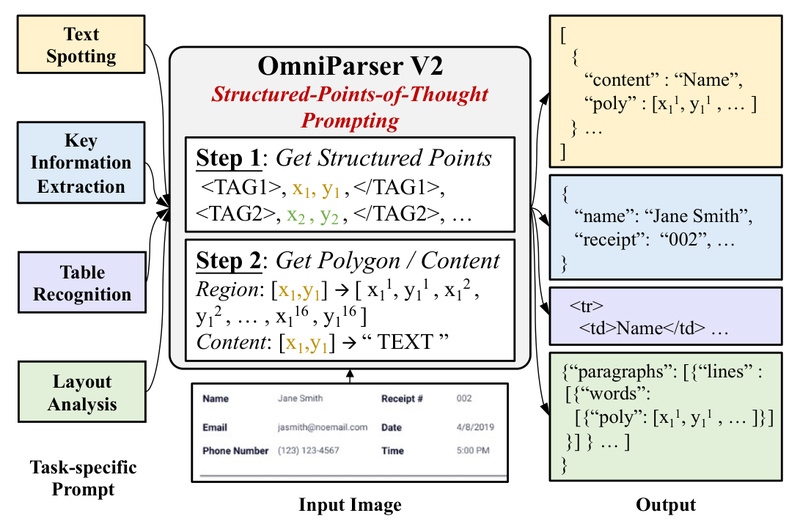

OmniParser V2: One Unified Model for Text Spotting, Table Recognition, and Document Understanding 1800

In today’s data-driven world, businesses and researchers routinely process documents—scanned invoices, forms, tables, and receipts—to extract structured information. Traditionally, this…

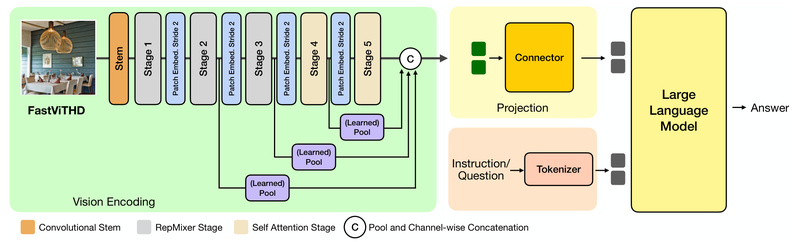

FastVLM: High-Resolution Vision-Language Inference with 85× Faster Time-to-First-Token and Minimal Compute Overhead 7052

Vision-language models (VLMs) are increasingly central to real-world applications, from mobile assistants that read documents to AI systems that interpret…

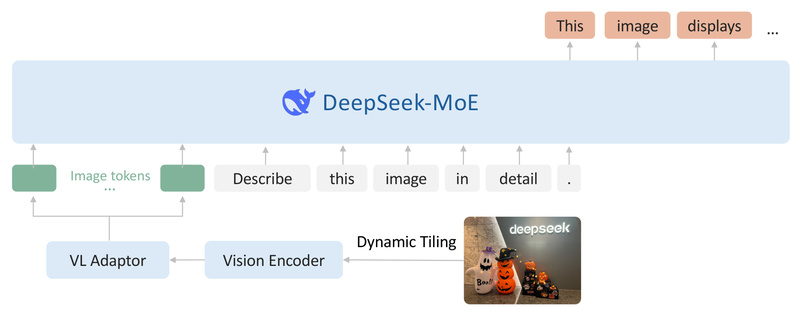

DeepSeek-VL2: High-Performance Vision-Language Understanding with Efficient Mixture-of-Experts Architecture 5072

DeepSeek-VL2 is an open-source, advanced vision-language model (VLM) built on a Mixture-of-Experts (MoE) architecture, engineered for robust multimodal understanding across…