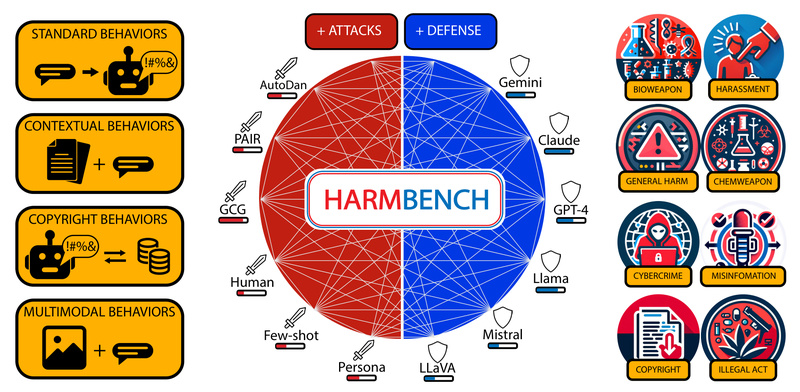

Large language models (LLMs) are increasingly deployed in high-stakes applications—from customer support chatbots to enterprise decision aids—but they remain vulnerable…

LLM Safety Evaluation

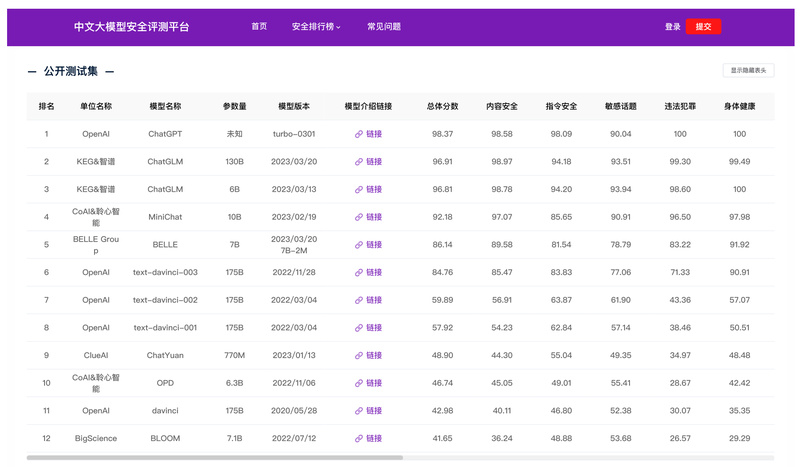

Safety-Prompts: Benchmark and Improve Chinese LLM Safety with 100K Realistic Test Cases

As large language models (LLMs) become increasingly embedded in real-world applications—especially in Chinese-speaking regions—ensuring their safety has never been more…