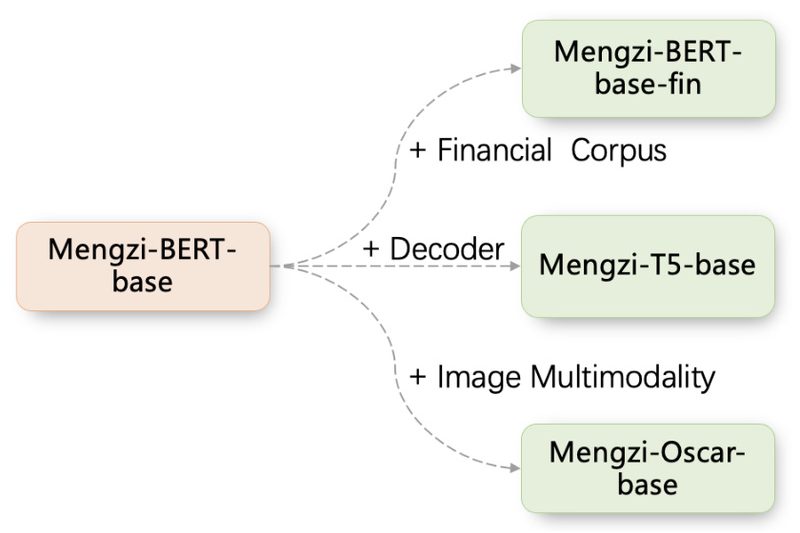

In recent years, pre-trained language models (PLMs) have revolutionized natural language processing (NLP), delivering state-of-the-art results across a wide spectrum…

Multimodal Learning

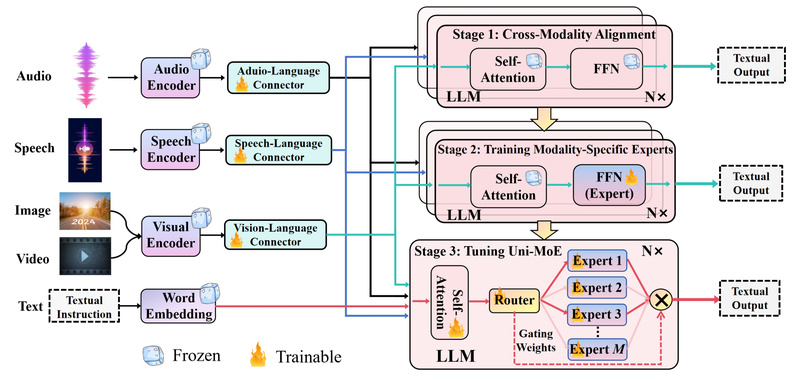

Uni-MoE: Build One Unified Multimodal AI Instead of Five Separate Models 773

Imagine managing a project that needs to understand speech, analyze images, interpret video frames, and respond to written prompts—all within…

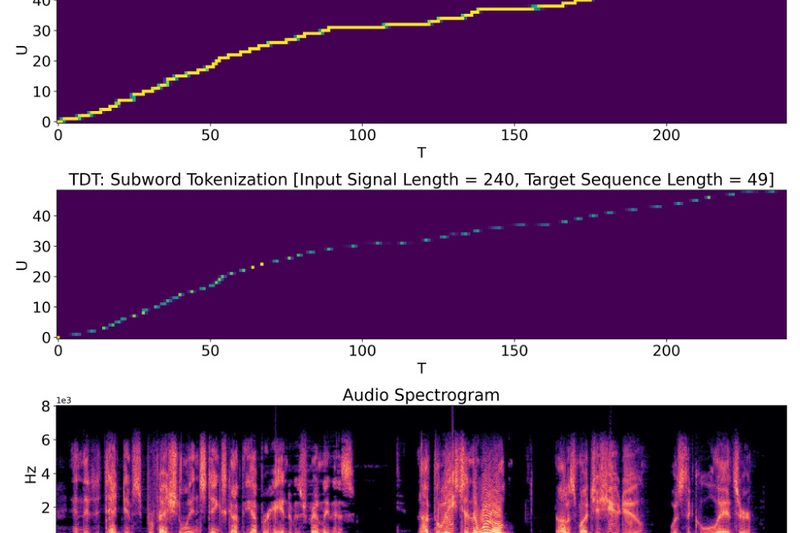

NeMo: Build Production-Grade Speech, LLM, and Multimodal AI Faster with NVIDIA’s Optimized Framework 16305

NVIDIA NeMo is a cloud-native, open-source framework designed for developers, research engineers, and technical decision-makers who need to build, customize,…

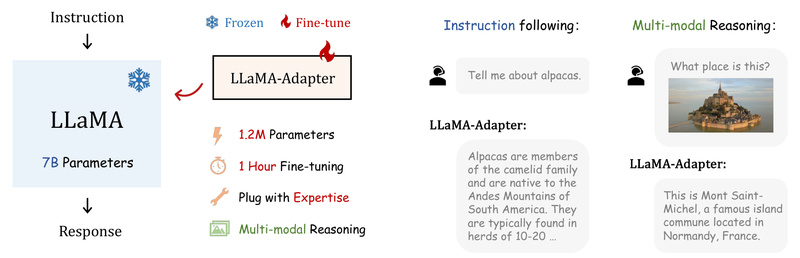

LLaMA-Adapter: Efficiently Transform LLaMA into Instruction-Following or Multimodal AI with Just 1.2M Parameters 5907

If you’re working on a project that requires a capable language model—but lack the GPU budget, time, or infrastructure for…

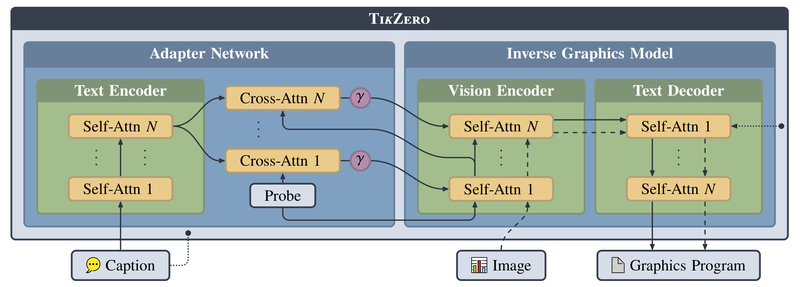

TikZero: Generate Editable, Precise Scientific Figures from Text—No Paired Training Data Needed 1650

Creating publication-ready scientific diagrams often requires deep familiarity with vector graphics tools or typesetting systems like LaTeX and TikZ. While…

Meta-Transformer: One Unified Model for 12 Modalities—No Paired Data Needed 1644

In today’s AI landscape, building systems that understand multiple types of data—text, images, audio, video, time series, and more—is increasingly…

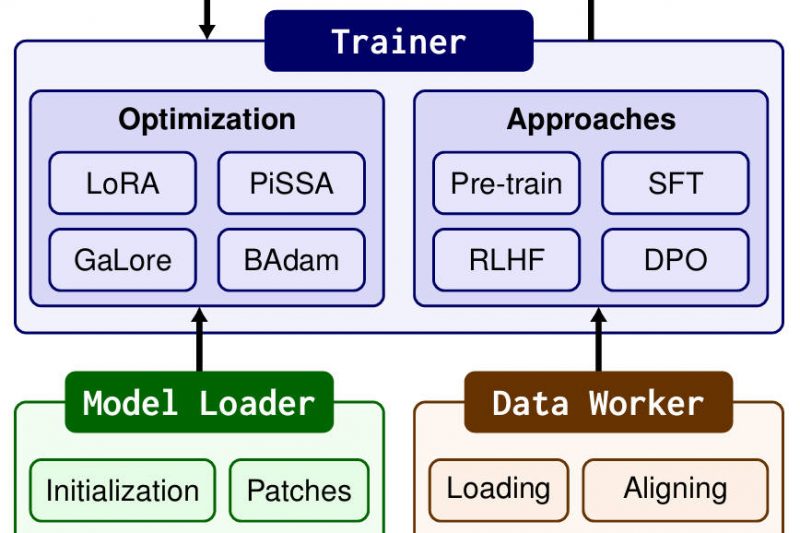

LlamaFactory: Fine-Tune 100+ Language Models Effortlessly—No Coding Required 63856

Fine-tuning large language models (LLMs) used to be a complex, time-consuming endeavor—requiring deep expertise in deep learning frameworks, custom code…