For teams building real-world AI applications that combine vision and language—whether it’s parsing scanned documents, analyzing instructional videos, or creating…

Multimodal Reasoning

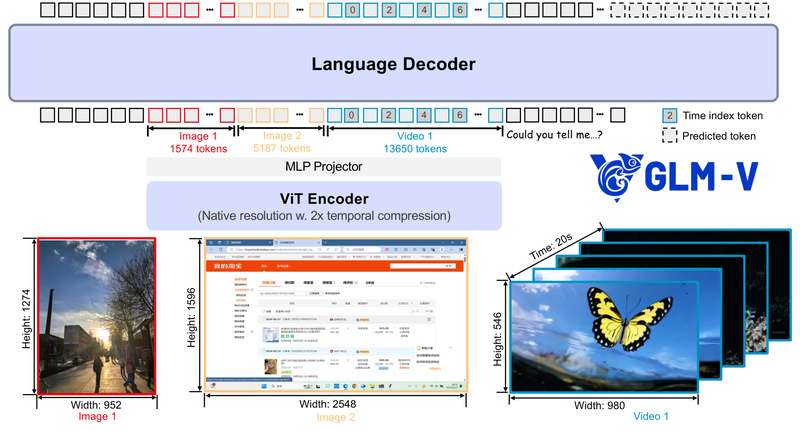

GLM-V: Open-Source Vision-Language Models for Real-World Multimodal Reasoning, GUI Agents, and Long-Context Document Understanding

If your team is building AI applications that need to see, reason, and act—like desktop assistants that interpret screenshots, UI…

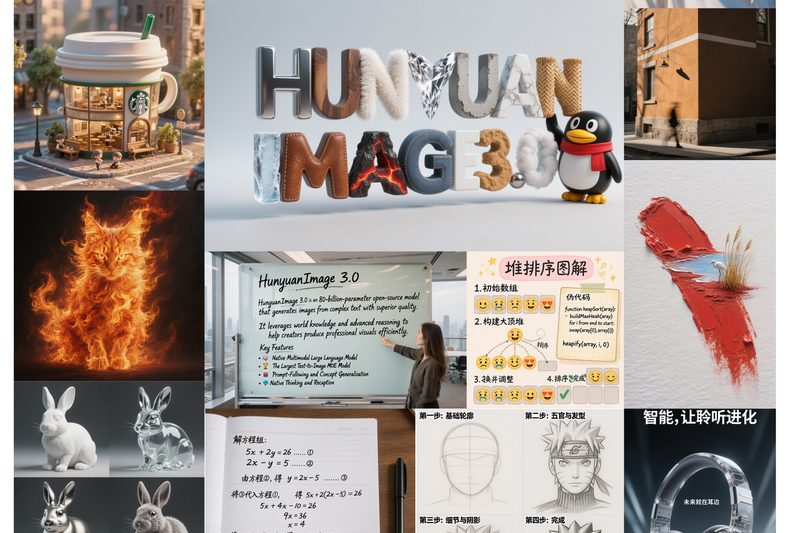

HunyuanImage-3.0: The Largest Open-Source Multimodal Image Generator with Native Reasoning and MoE Architecture

HunyuanImage-3.0 is a groundbreaking open-source image generation model developed by Tencent. Unlike traditional diffusion-based approaches, it builds a native multimodal…

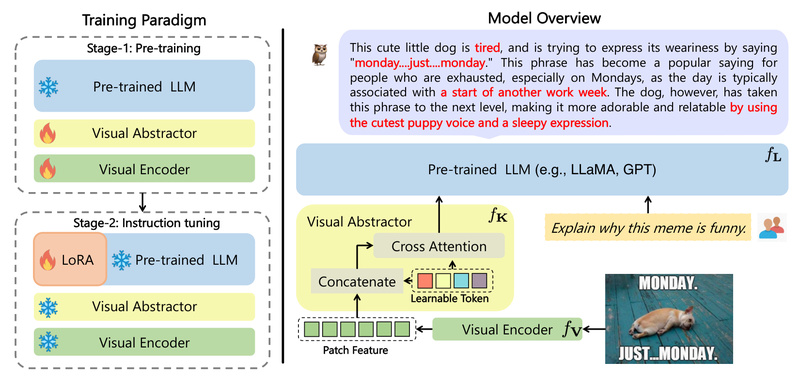

mPLUG-Owl: Modular Multimodal AI for Real-World Vision-Language Tasks

In today’s AI-driven product landscape, the ability to understand both images and text isn’t just a research novelty—it’s a practical…

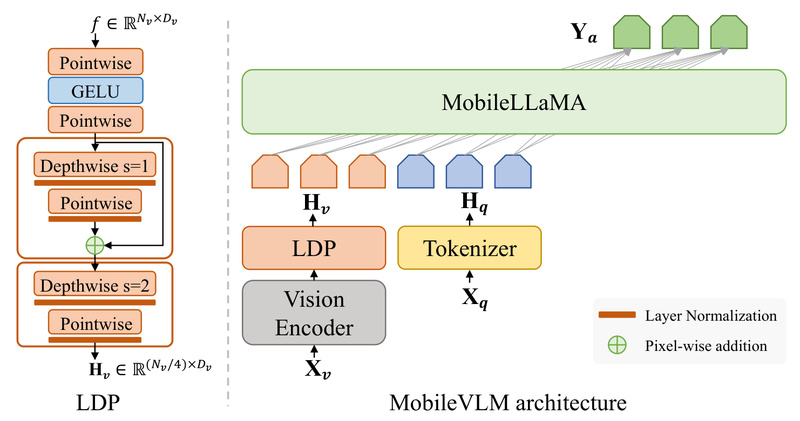

MobileVLM: High-Performance Vision-Language AI That Runs Fast and Privately on Mobile Devices

MobileVLM is a purpose-built vision-language model (VLM) engineered from the ground up for on-device deployment on smartphones and edge hardware.…

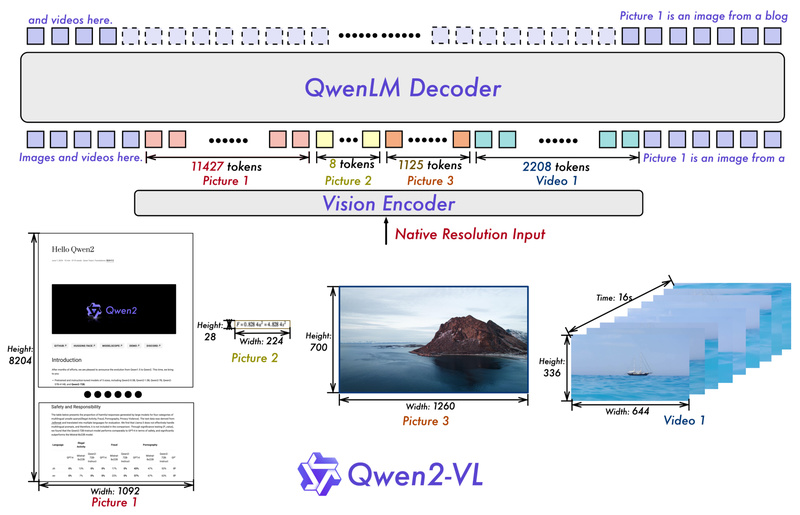

Qwen2-VL: Process Any-Resolution Images and Videos with Human-Like Visual Understanding

Vision-language models (VLMs) are increasingly essential for tasks that require joint understanding of images, videos, and text—ranging from document parsing…

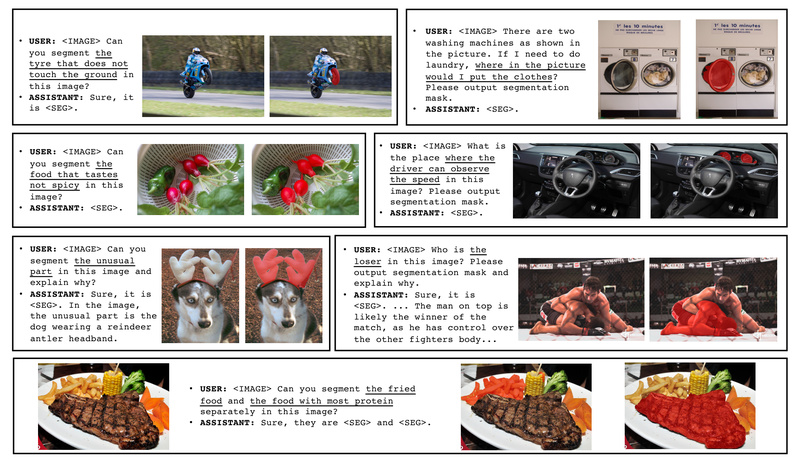

LISA: Segment Anything by Understanding What You *Really* Mean

Imagine asking a computer vision system to “segment the object that makes the woman stand higher” or “show me the…

MME: The First Comprehensive Benchmark to Objectively Evaluate Multimodal Large Language Models

Multimodal Large Language Models (MLLMs) have captured the imagination of researchers and developers alike—promising capabilities like generating poetry from images,…

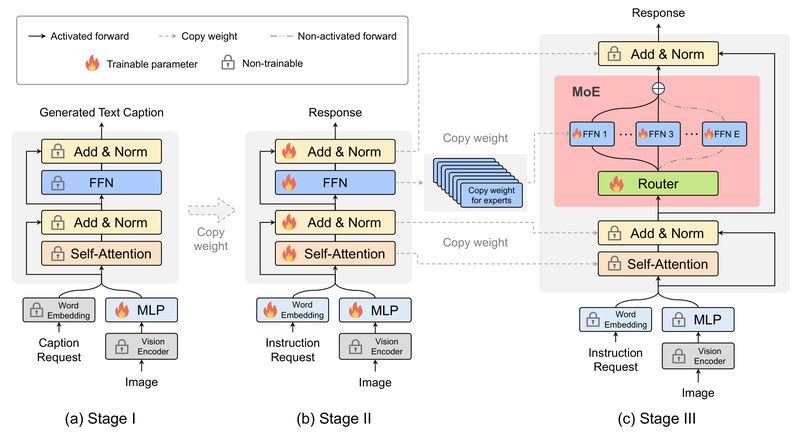

MoE-LLaVA: High-Performance Vision-Language Understanding with Sparse, Efficient Inference

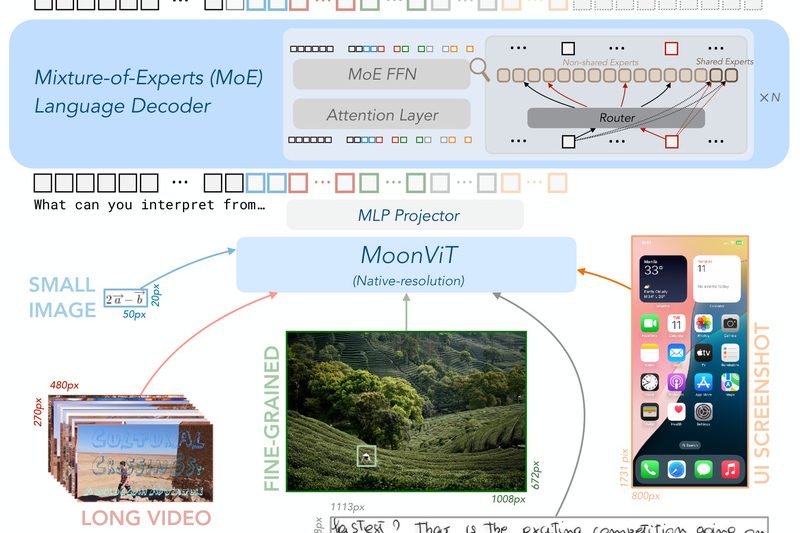

MoE-LLaVA (Mixture of Experts for Large Vision-Language Models) redefines efficiency in multimodal AI by delivering performance that rivals much larger…

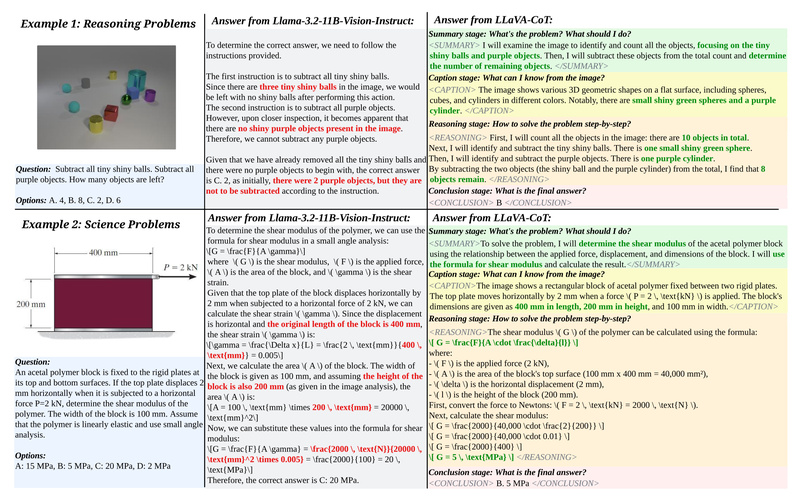

LLaVA-CoT: Step-by-Step Visual Reasoning for Reliable, Explainable Multimodal AI

Most vision-language models (VLMs) today can describe what’s in an image—but they often falter when asked to reason about it.…