Retrieval-Augmented Generation (RAG) has become a go-to strategy for grounding large language model (LLM) responses in real-world knowledge. By pulling…

Retrieval-Augmented Generation

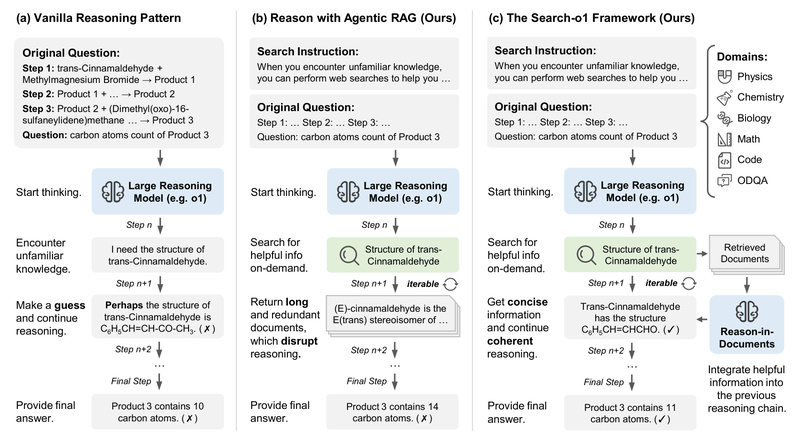

Search-o1: Boost Large Reasoning Models with On-Demand Knowledge Retrieval for Complex Problem Solving

Large reasoning models (LRMs)—such as OpenAI’s o1—excel at multi-step logical reasoning, especially in science, math, and code-related tasks. But they…

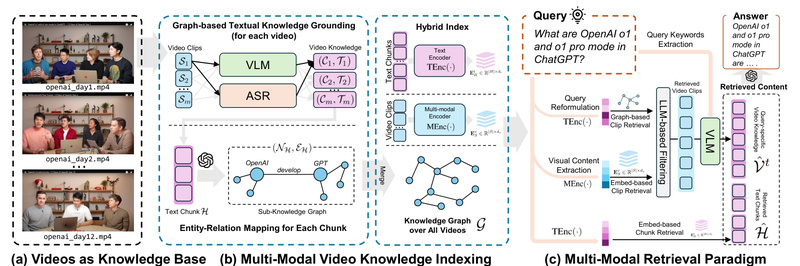

VideoRAG: Unlock Long-Form Video Understanding with Retrieval-Augmented Generation for AI-Powered Insights

Imagine being able to ask questions like “What did the professor say about quantum entanglement in Lecture 3?” or “Show…

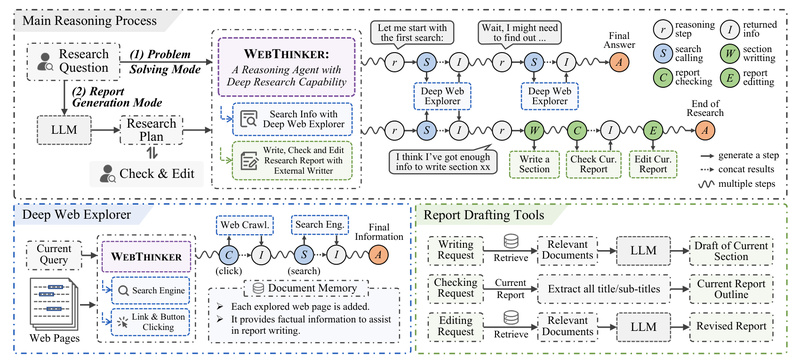

WebThinker: Autonomous Web Research for Large Reasoning Models That Need Real-Time, Multi-Source Knowledge Synthesis

In today’s fast-evolving information landscape, even the most advanced large reasoning models (LRMs)—such as OpenAI-o1 or DeepSeek-R1—are constrained by their…

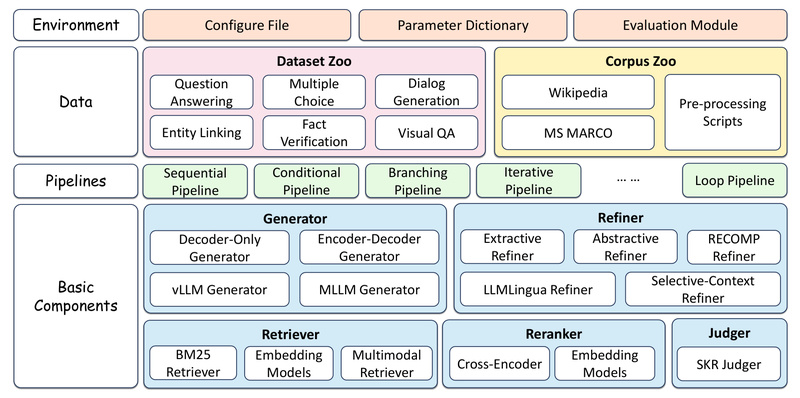

FlashRAG: A Modular, Lightweight Toolkit for Reproducible and Efficient Retrieval-Augmented Generation Research

Retrieval-Augmented Generation (RAG) has emerged as a cornerstone technique for enhancing the factual grounding, knowledge scope, and reasoning capabilities of…

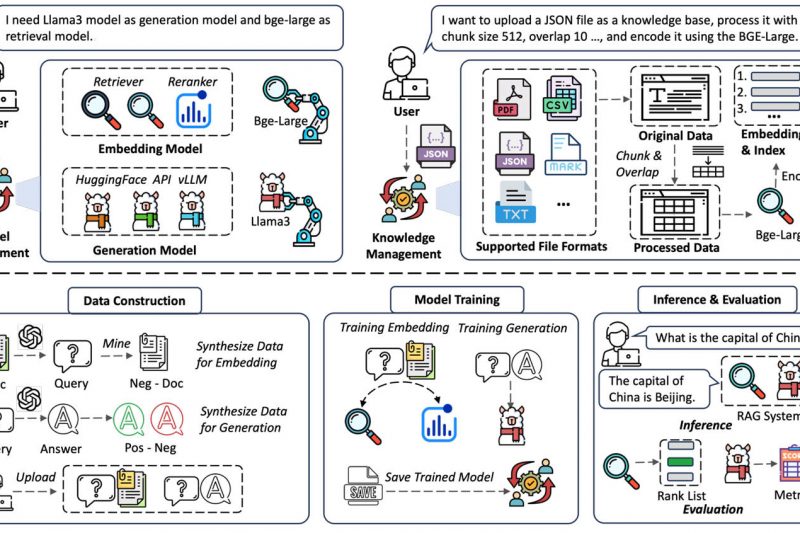

UltraRAG: Build Adaptive, Multimodal RAG Systems Without Writing Complex Code

Retrieval-Augmented Generation (RAG) has become a cornerstone technique for grounding large language models (LLMs) in real-world knowledge. However, building effective…

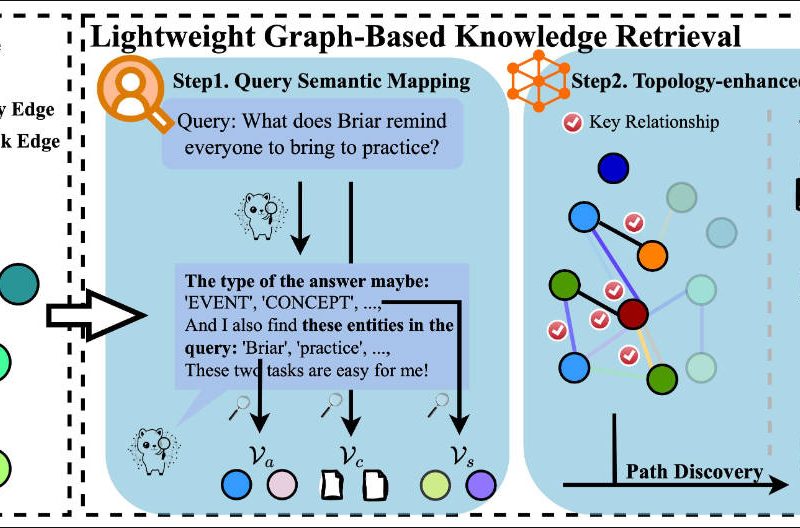

MiniRAG: Enable Small Language Models to Deliver Powerful RAG with Minimal Resources

Retrieval-Augmented Generation (RAG) has become a cornerstone technique for grounding language models in factual knowledge. However, traditional RAG pipelines struggle…