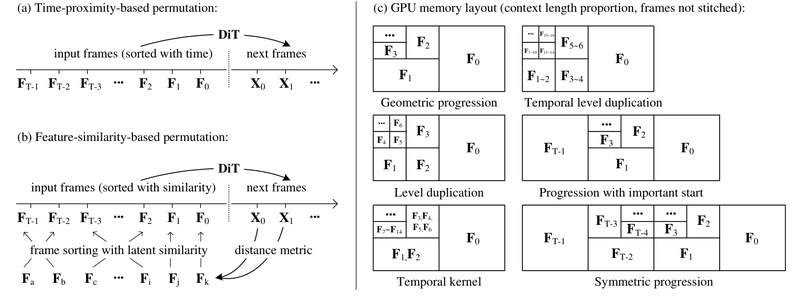

Creating long, coherent, and visually rich videos with AI has long been bottlenecked by computational complexity, memory constraints, and error…

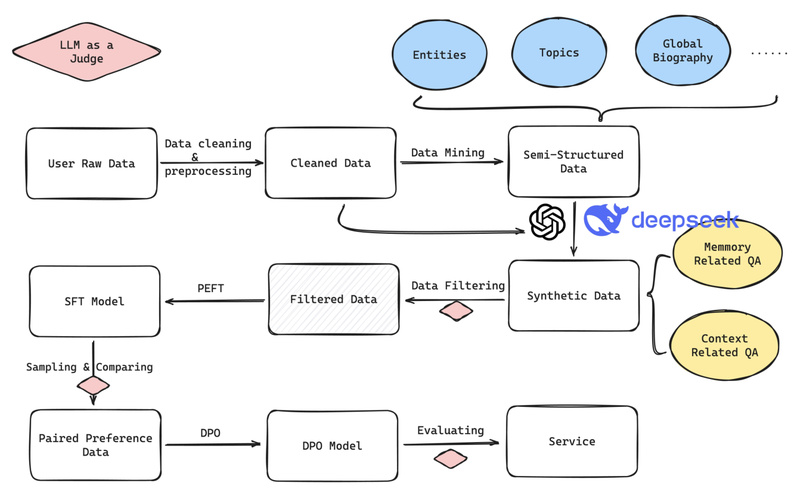

Second-Me: Your Private, Persistent AI Self That Eliminates Repetitive Data Entry and Reclaims Your Digital Identity 14752

In a world where AI assistants increasingly mediate our interactions with apps, services, and even other people, a critical problem…

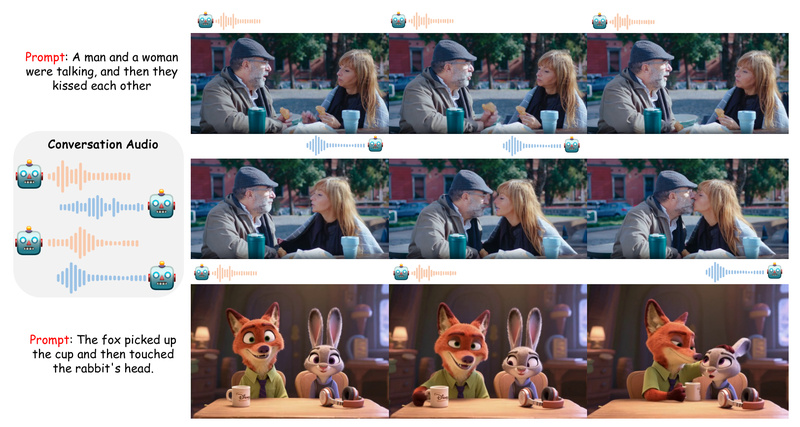

MultiTalk: Generate Realistic Multi-Person Conversational Videos from Audio with Precise Speaker Binding 2704

Creating lifelike videos of people talking has long been dominated by “talking head” technologies—tools that animate a single face from…

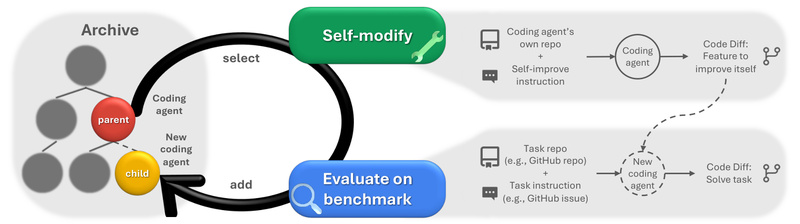

DGM: Self-Improving AI Agents That Evolve Their Own Code Without Human Redesign 1762

Most AI systems today are stuck in time. Their architectures, prompts, and tooling are all hand-crafted by engineers—once deployed, they…

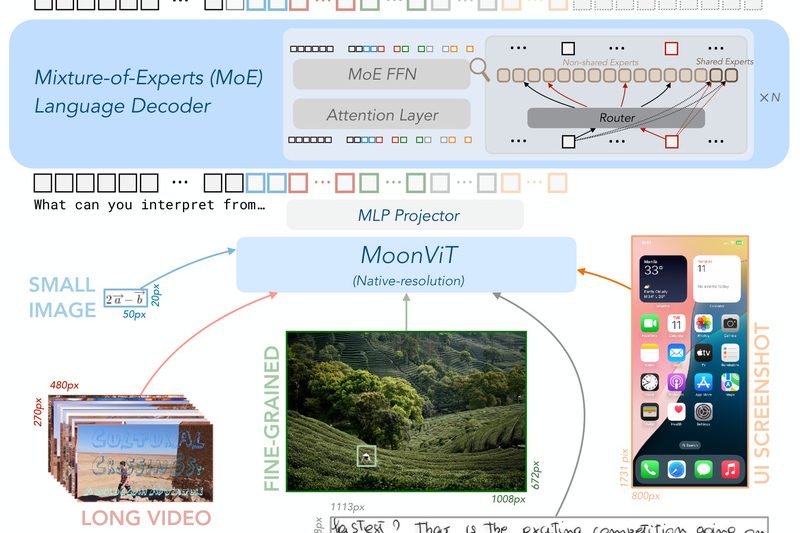

Kimi-VL: High-Performance Vision-Language Reasoning with Only 2.8B Active Parameters 1122

For teams building real-world AI applications that combine vision and language—whether it’s parsing scanned documents, analyzing instructional videos, or creating…

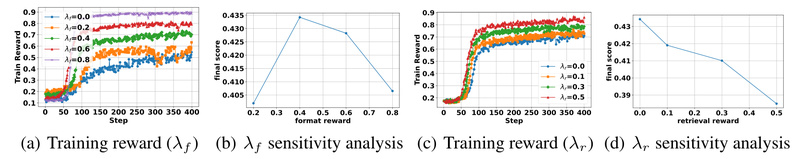

Search-R1: Train LLMs to Reason and Search Like Human Researchers Using Open-Source Reinforcement Learning 3614

In the rapidly evolving landscape of large language models (LLMs), a critical limitation persists: despite their impressive fluency, LLMs often…

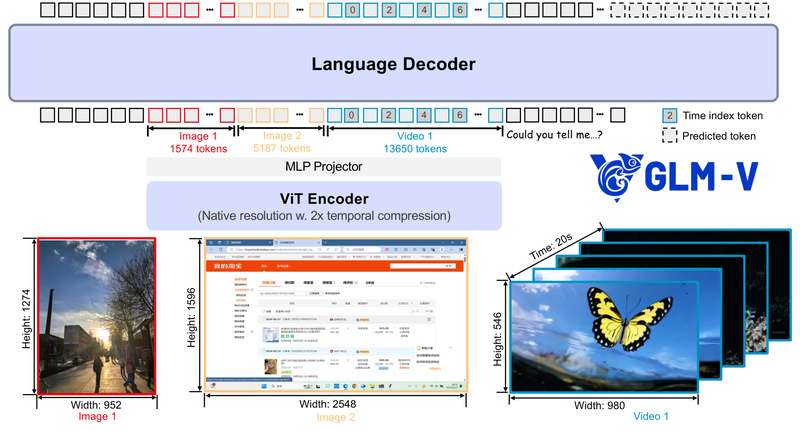

GLM-V: Open-Source Vision-Language Models for Real-World Multimodal Reasoning, GUI Agents, and Long-Context Document Understanding 1899

If your team is building AI applications that need to see, reason, and act—like desktop assistants that interpret screenshots, UI…

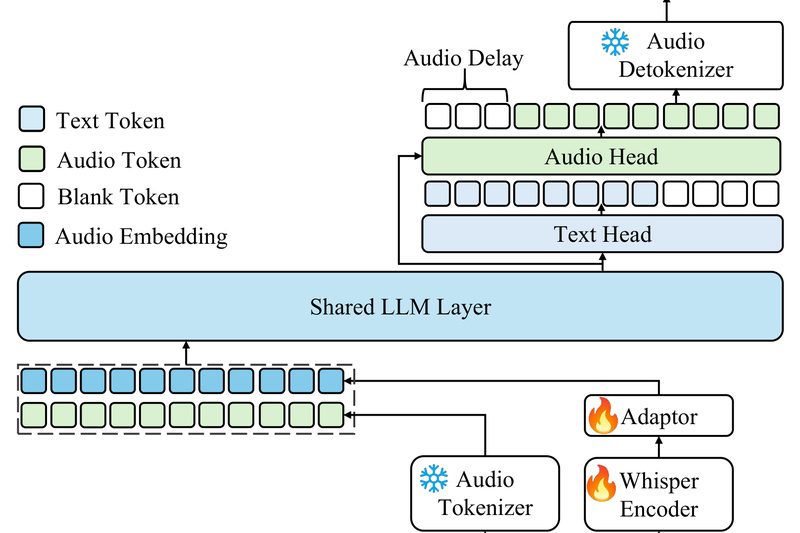

Kimi-Audio: A Unified, Open-Source Foundation Model for Speech, Sound, and Spoken Dialogue 4373

Building voice-enabled applications today often means stitching together separate models for speech recognition, sound classification, audio captioning, and spoken response…

LightZero: One Lightweight Framework for MCTS + Deep Reinforcement Learning Across Games, Control, and Multi-Task Planning 1481

If you’re evaluating tools for building intelligent agents that combine planning and learning—whether for games, robotics, scientific discovery, or general…

Step-Audio 2: Open-Source Multimodal LLM for Paralinguistic-Aware, Tool-Enhanced Speech Understanding and Conversation 1252

Step-Audio 2 is an open-source, end-to-end multimodal large language model (MLLM) purpose-built for real-world audio understanding and natural speech conversation.…