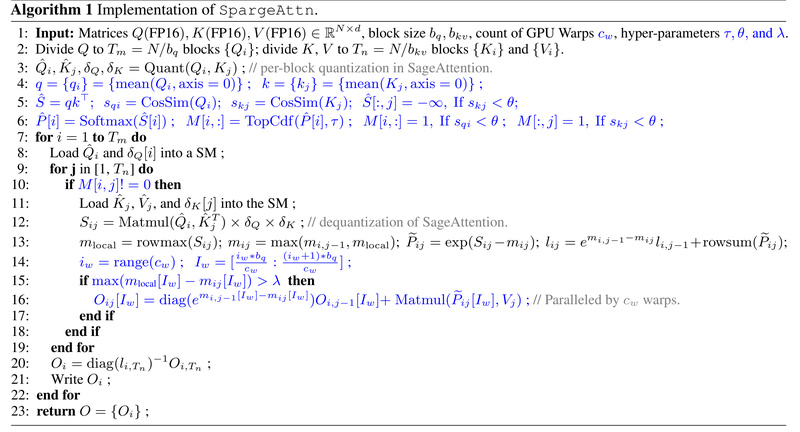

Large AI models—from language generators to video diffusion systems—are bottlenecked by the attention mechanism, whose computational cost scales quadratically with…

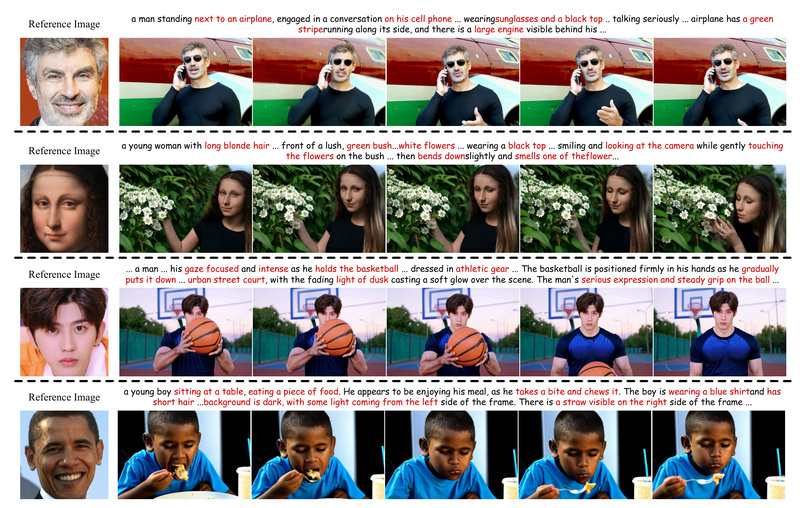

ConsisID: Generate Identity-Preserving Videos from Text and a Single Image—No Fine-Tuning Required

Creating videos that faithfully preserve a person’s identity from just a text prompt and a reference image has long been…

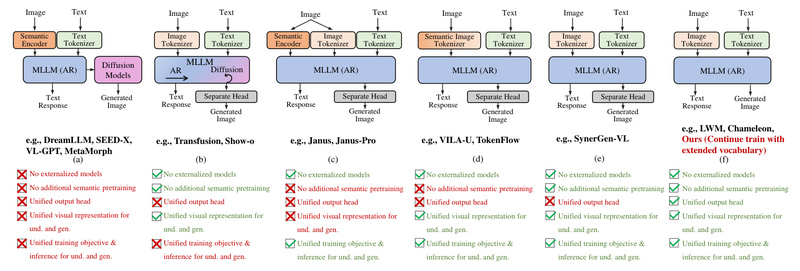

Liquid: One Unified Language Model for Text and Images—No CLIP, No Compromises

What if a single large language model (LLM) could both understand and generate high-quality images—without relying on external vision encoders…

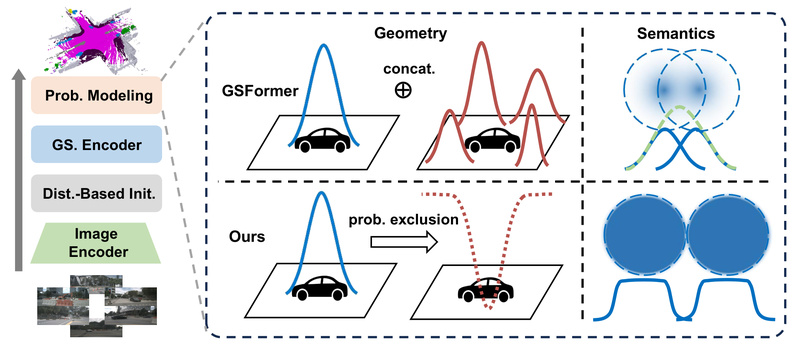

GaussianFormer-2: Efficient 3D Semantic Occupancy Prediction for Vision-Centric Autonomous Driving

In autonomous driving systems that rely primarily on camera inputs—so-called vision-centric setups—accurately understanding the 3D structure and semantics of the…

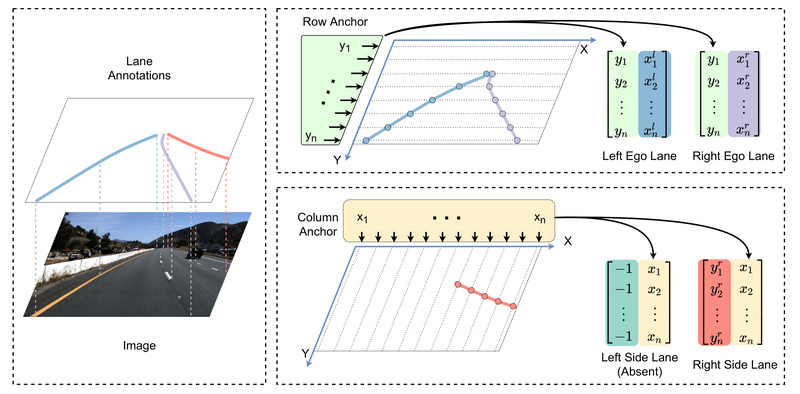

Ultra-Fast-Lane-Detection-V2: Real-Time, Robust Lane Detection for Autonomous Driving and ADAS Systems

Lane detection is a foundational capability in autonomous driving and advanced driver-assistance systems (ADAS). Traditional approaches often rely on pixel-wise…

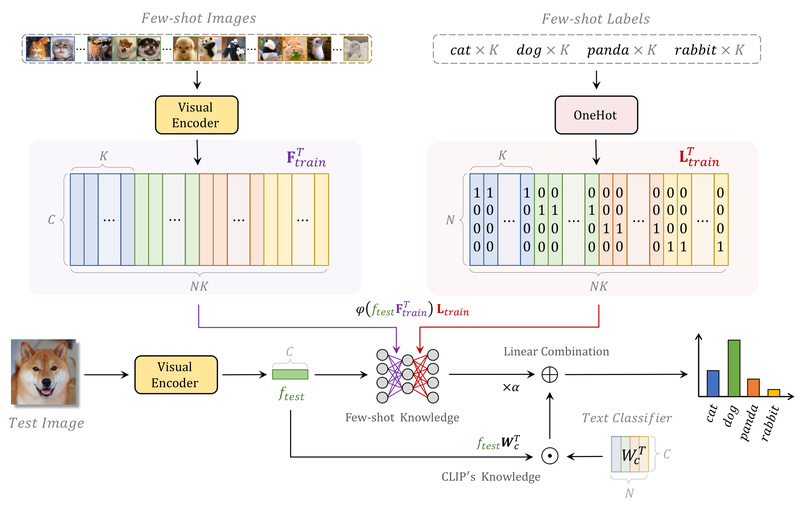

Tip-Adapter: Boost Few-Shot Image Classification Without Any Training

In the era of foundation models, CLIP (Contrastive Language–Image Pretraining) has revolutionized how we approach vision-language tasks—especially zero-shot image classification.…

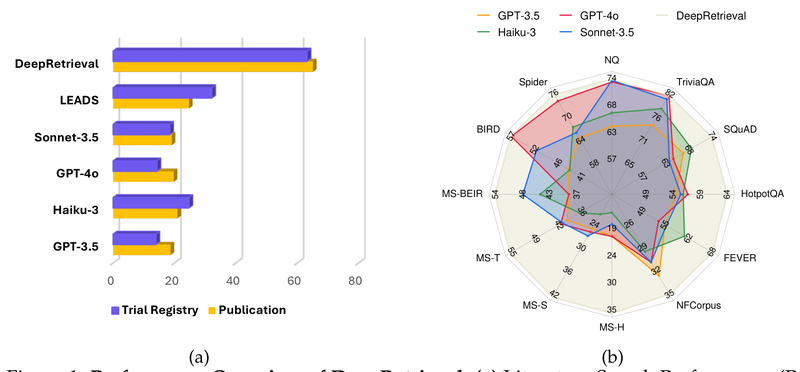

DeepRetrieval: Boost Search Accuracy by 2.6× Without Any Labeled Data—Powered by Reinforcement Learning

Imagine you’re building a retrieval-augmented generation (RAG) system, a scientific literature assistant, or a natural-language interface to a clinical trial…

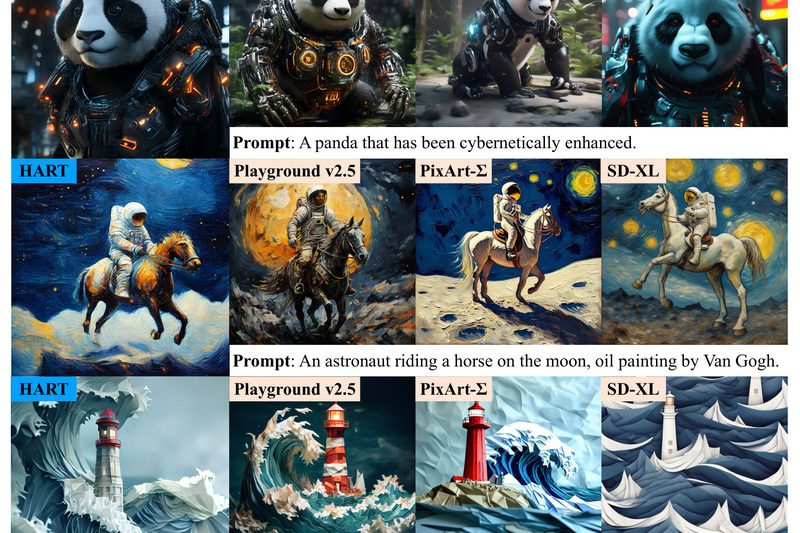

HART: Generate 1024×1024 Images Faster and More Efficiently Than Diffusion Models

For teams building AI-powered visual applications—whether in creative tools, digital content platforms, or rapid prototyping—the trade-off between image quality, speed,…

X-Pose: Detect Any Keypoint Across Humans, Animals, and Objects with One Unified Model

Keypoint detection—identifying specific, semantically meaningful points on objects like joints on a human body, facial landmarks on an animal, or…

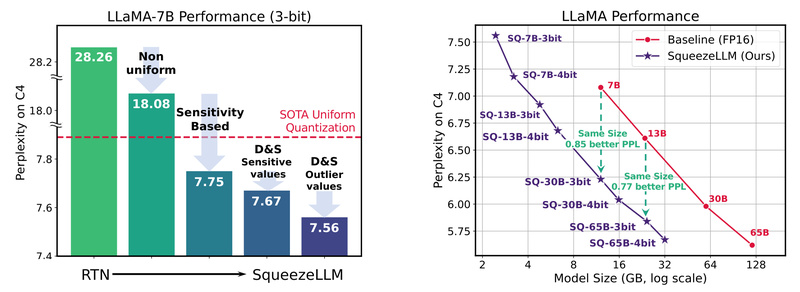

SqueezeLLM: Deploy High-Accuracy LLMs in Half the Memory Without Sacrificing Performance

Deploying large language models (LLMs) like LLaMA, Mistral, or Vicuna often demands multiple high-end GPUs, complex inference pipelines, and substantial…