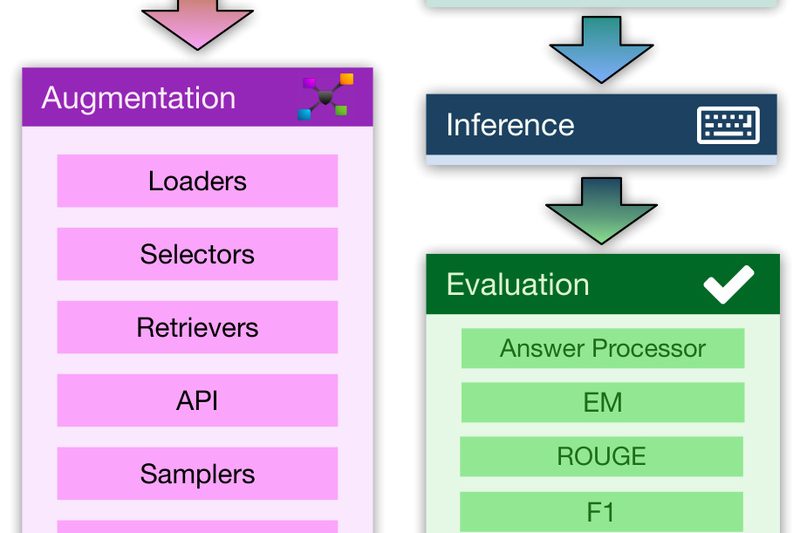

Building effective Retrieval-Augmented Generation (RAG) systems is notoriously difficult. Practitioners must juggle data preparation, retrieval integration, prompt engineering, model fine-tuning,…

Parameter-Efficient Fine-Tuning

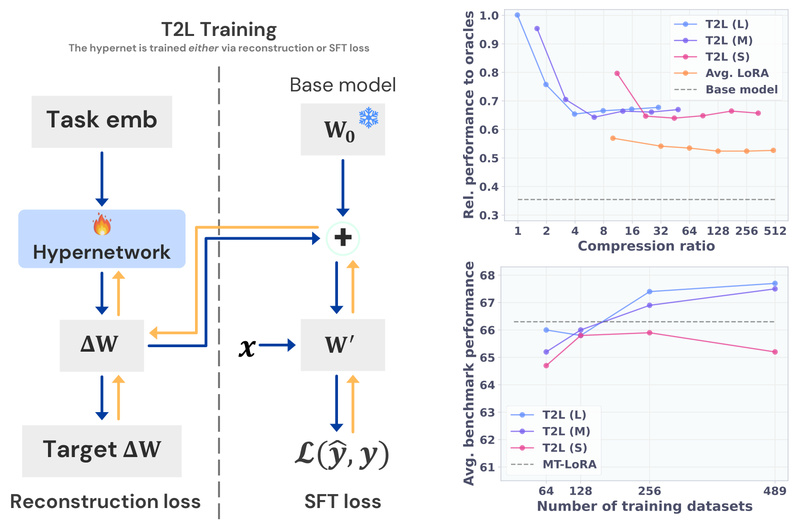

Text-to-LoRA: Instantly Customize LLMs with Plain English—No Training or Datasets Required

Large language models (LLMs) are powerful, but adapting them to specific tasks often demands significant effort: collecting labeled data, tuning…

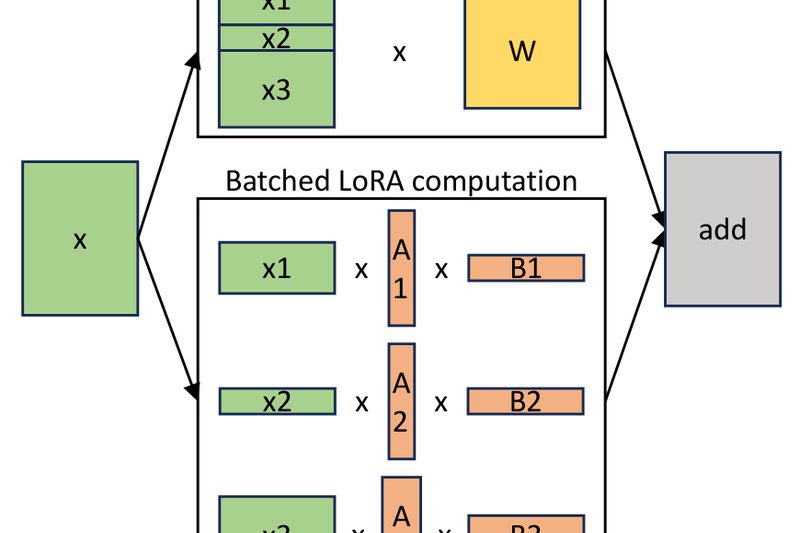

S-LoRA: Serve Thousands of Task-Specific LLMs Efficiently on a Single GPU

Deploying dozens—or even thousands—of fine-tuned large language models (LLMs) has traditionally been a costly and complex endeavor. Each adapter typically…

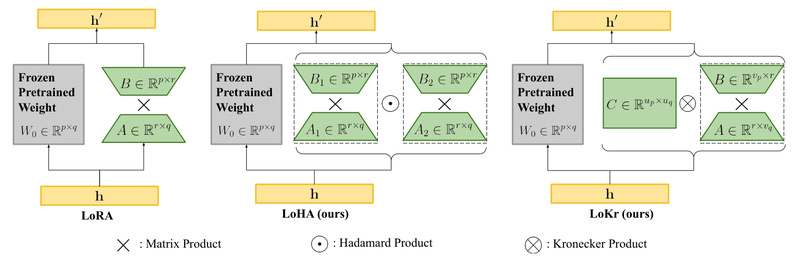

LyCORIS: Customize Stable Diffusion Without Retraining the Whole Model – Flexible, Lightweight Fine-Tuning for Text-to-Image Generation

If you’re working with text-to-image models like Stable Diffusion, you’ve likely faced the trade-off between customization and efficiency. Full fine-tuning…

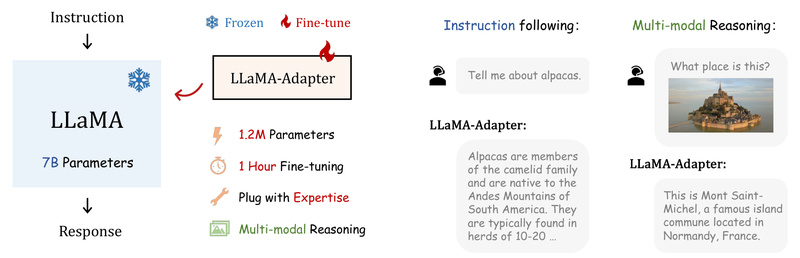

LLaMA-Adapter: Efficiently Transform LLaMA into Instruction-Following or Multimodal AI with Just 1.2M Parameters

If you’re working on a project that requires a capable language model—but lack the GPU budget, time, or infrastructure for…

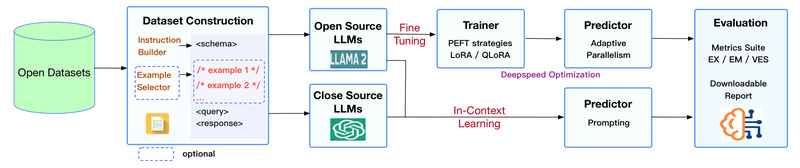

DB-GPT-Hub: Fine-Tune LLMs for Accurate Text-to-SQL Without Breaking the Bank

If you’ve ever tried building a natural language interface to a relational database, you know the real bottleneck isn’t the…

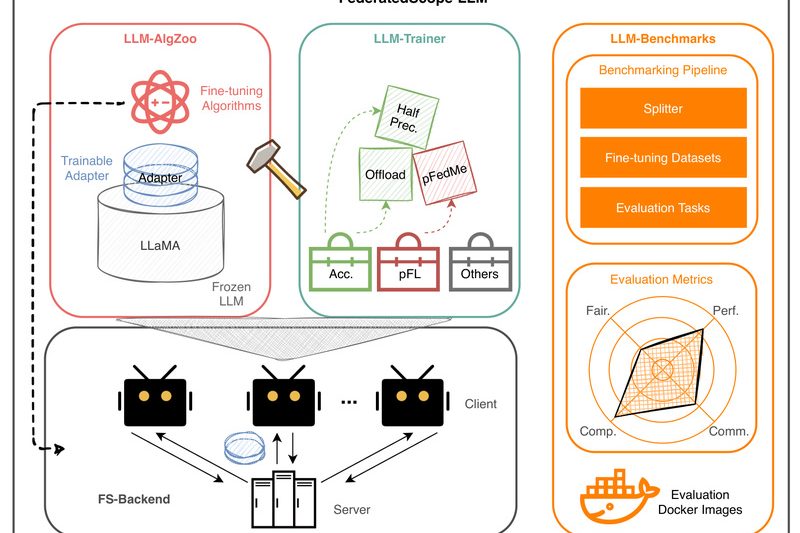

FederatedScope-LLM: Collaboratively Fine-Tune Large Language Models Without Sharing Private Data

In today’s data-sensitive world, organizations increasingly want to harness the power of large language models (LLMs) while complying with strict…