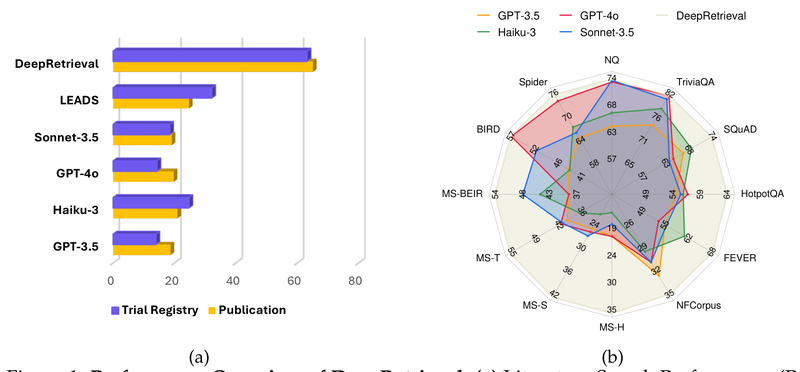

Imagine you’re building a retrieval-augmented generation (RAG) system, a scientific literature assistant, or a natural-language interface to a clinical trial…

Retrieval-Augmented Generation

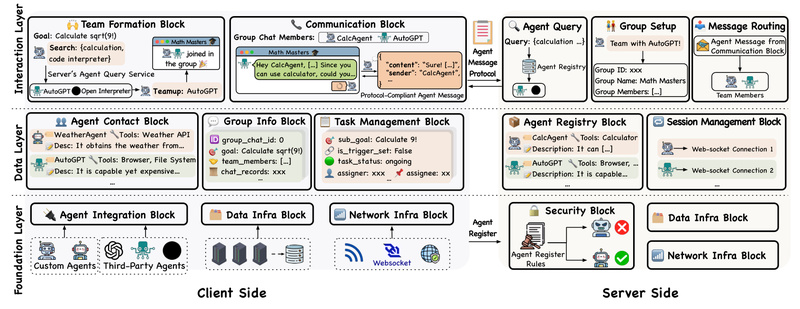

IoA: Enable Heterogeneous AI Agents to Collaborate Like the Internet — Solve Complex Tasks Beyond Single-Agent Limits

Imagine a world where AI agents—each with unique skills like web browsing, code execution, or data analysis—can autonomously find one…

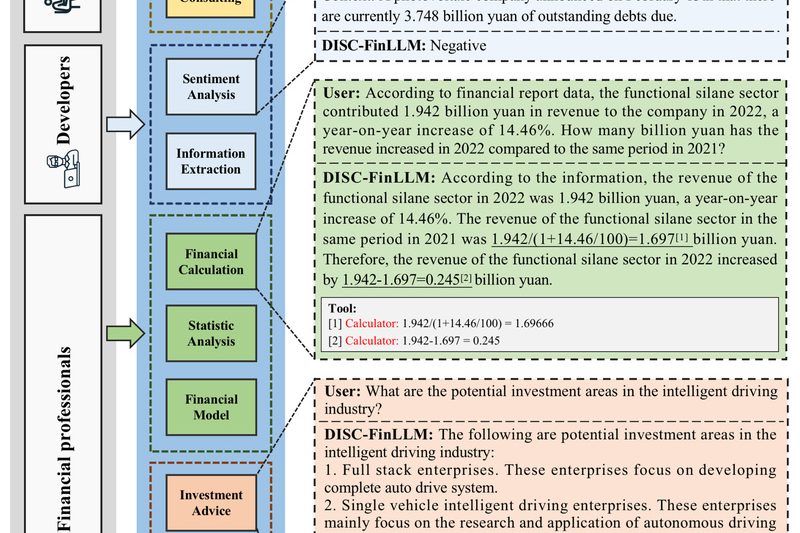

DISC-FinLLM: A Specialized Chinese Financial LLM for Accurate, Context-Aware Financial Intelligence

If you’re building AI-powered tools for the Chinese financial sector—whether for banking, fintech, investment research, or regulatory compliance—you’ve likely run…

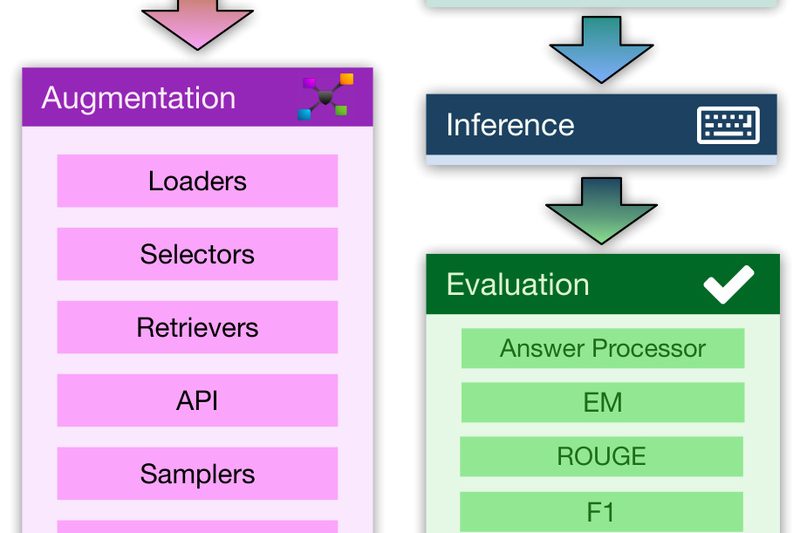

RAG Foundry (RAG-FiT): Build, Train, and Evaluate Domain-Specific RAG Systems Without the Complexity

Building effective Retrieval-Augmented Generation (RAG) systems is notoriously difficult. Practitioners must juggle data preparation, retrieval integration, prompt engineering, model fine-tuning,…

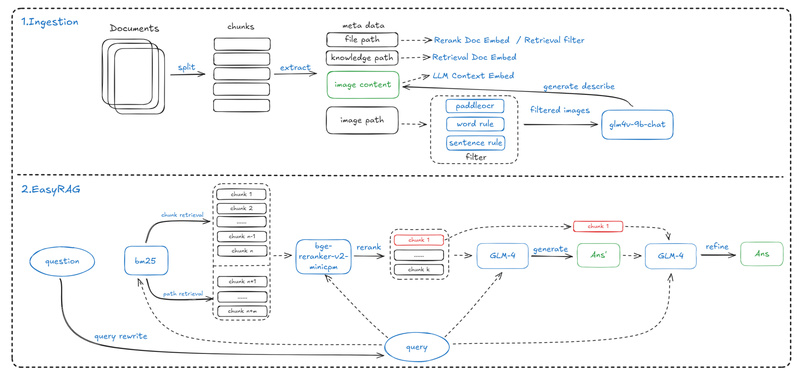

EasyRAG: A Lightweight, High-Accuracy RAG Framework for Resource-Constrained Network Operations and Enterprise QA

In today’s fast-paced IT and enterprise environments, teams increasingly rely on retrieval-augmented generation (RAG) systems to provide accurate, context-aware answers…

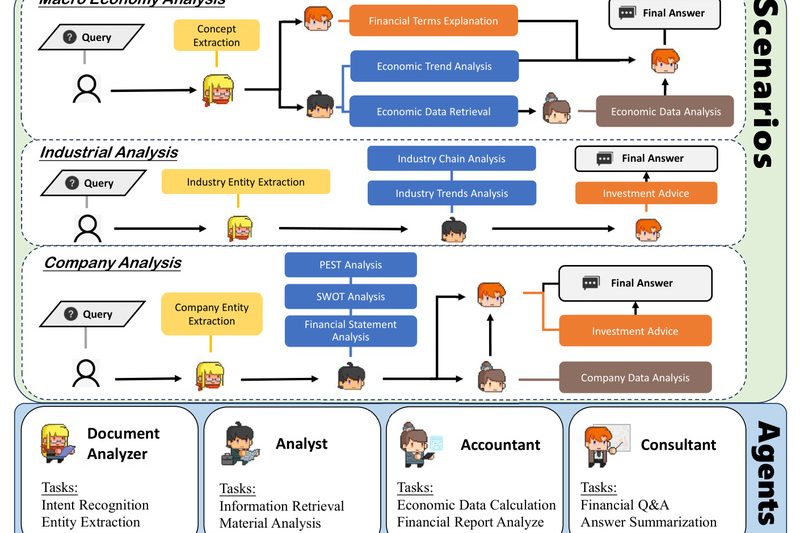

FinTeam: A Multi-Agent Financial Intelligence System That Generates Human-Accepted Reports and Outperforms GPT-4o

Financial analysis is rarely a solo endeavor. In real-world institutions—from investment banks to asset management firms—complex tasks like producing quarterly…

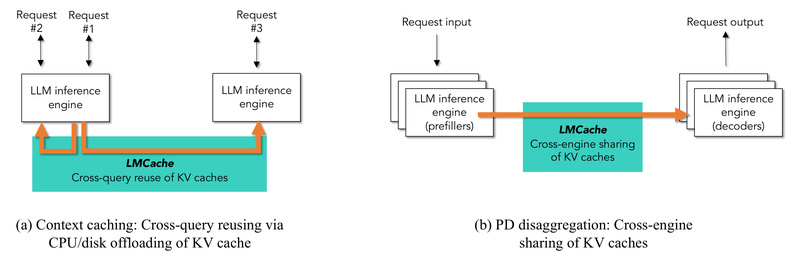

LMCache: Slash LLM Inference Latency and Multiply Throughput with Enterprise-Grade KV Cache Reuse

Deploying large language models (LLMs) at scale introduces a familiar bottleneck: the growing size of Key-Value (KV) caches rapidly outpaces…

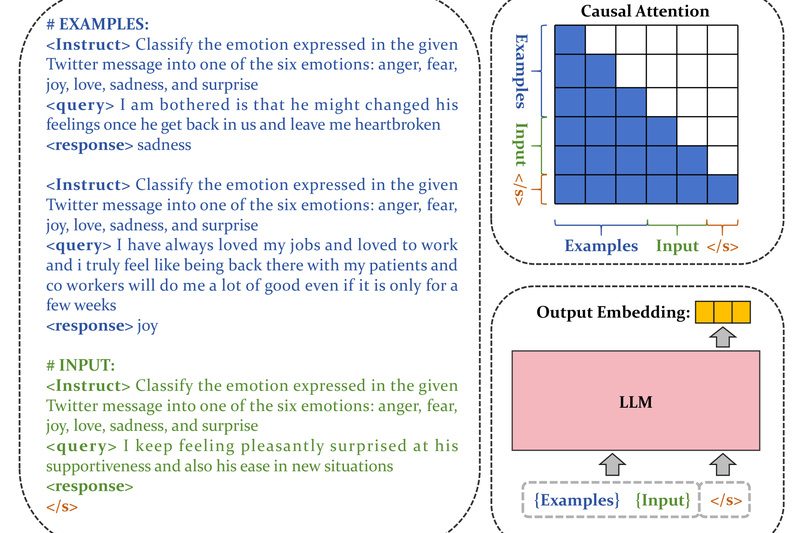

FlagEmbedding: High-Performance, Task-Aware Text Embeddings for Multilingual RAG and Semantic Search

Modern AI applications—from customer support chatbots to enterprise knowledge retrieval—rely heavily on high-quality text embeddings to power semantic search and…

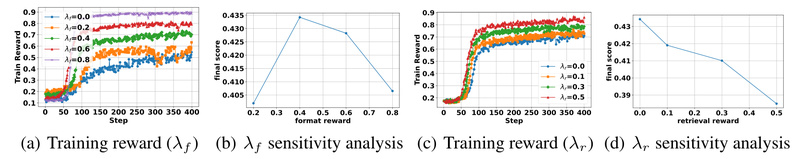

Search-R1: Train LLMs to Reason and Search Like Human Researchers Using Open-Source Reinforcement Learning

In the rapidly evolving landscape of large language models (LLMs), a critical limitation persists: despite their impressive fluency, LLMs often…

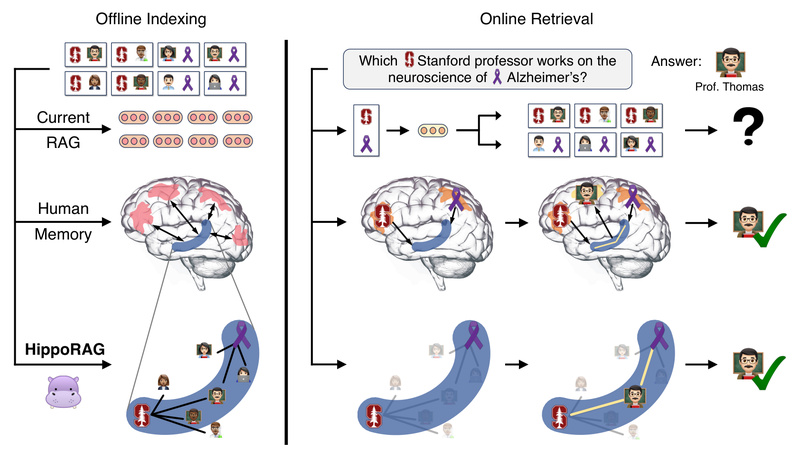

HippoRAG: Neurobiologically Inspired Long-Term Memory for LLMs That Solves Multi-Hop Reasoning and Continual Knowledge Integration

Retrieval-Augmented Generation (RAG) has become a go-to architecture for grounding large language models (LLMs) in external knowledge. Yet, even the…