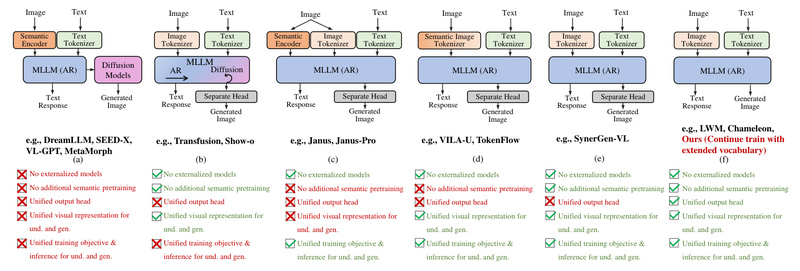

What if a single large language model (LLM) could both understand and generate high-quality images—without relying on external vision encoders…

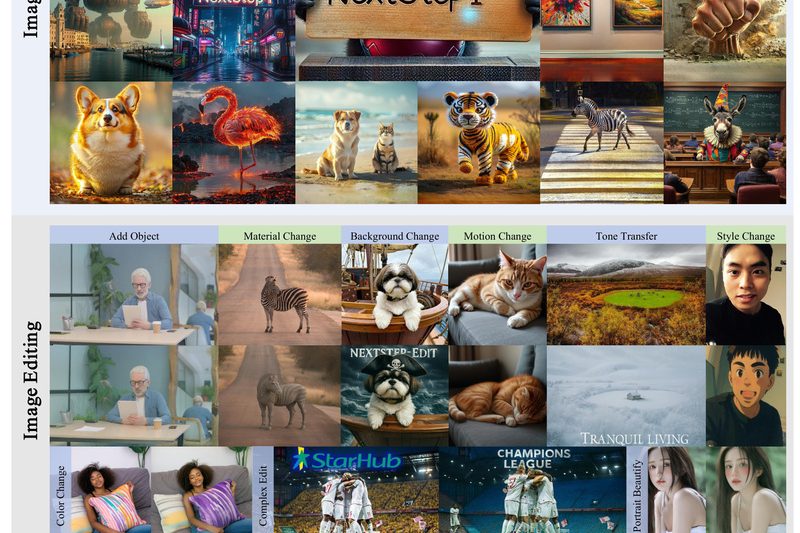

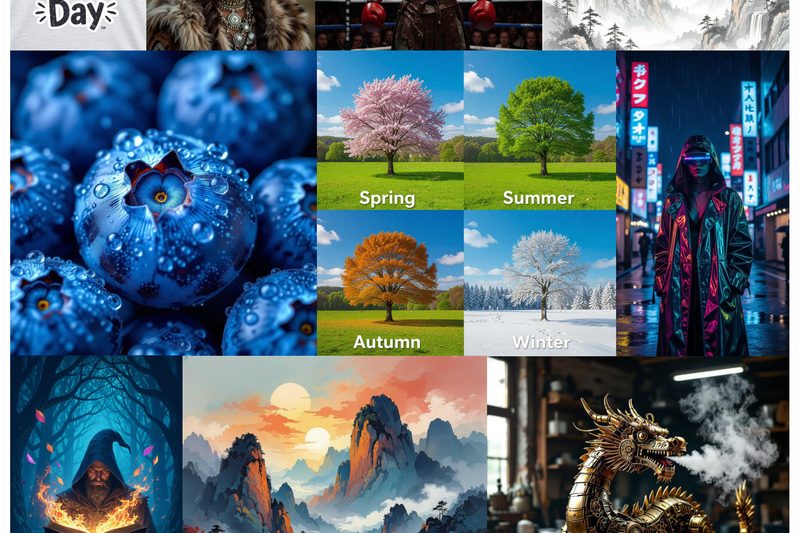

Text-to-Image Generation

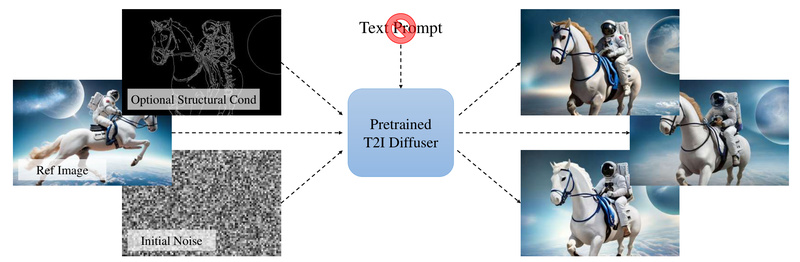

Prompt-Free Diffusion: Generate Images Without Writing a Single Text Prompt

Text-to-image (T2I) diffusion models have revolutionized creative workflows—but they come with a hidden bottleneck: prompt engineering. Describing an image in…

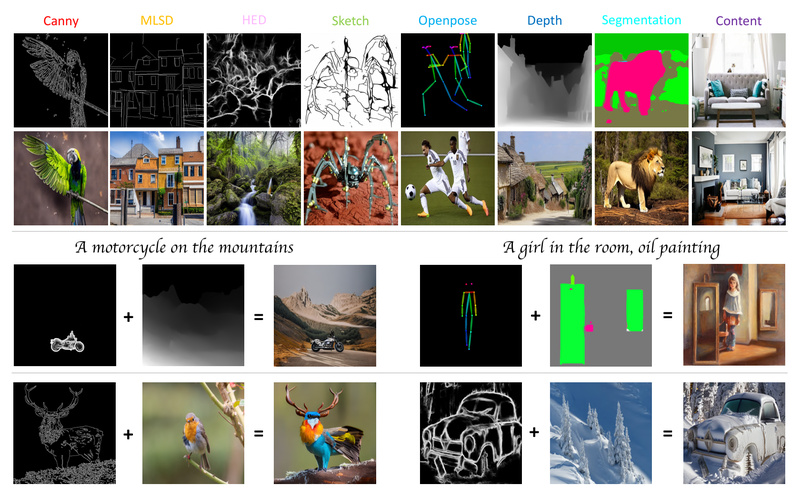

Uni-ControlNet: Unified Visual Control for Text-to-Image Generation Without Retraining Everything

Generating high-quality images from text prompts has become remarkably powerful thanks to diffusion models like Stable Diffusion. Yet, for many…

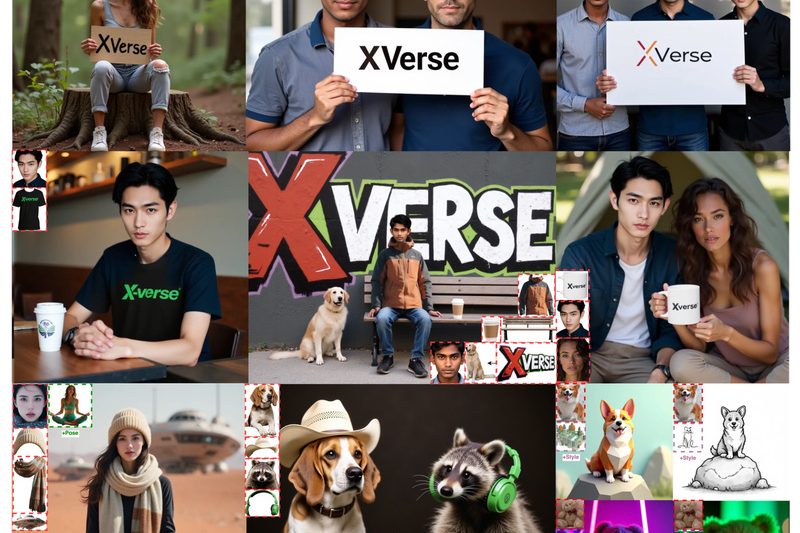

XVerse: Precise Multi-Subject Image Generation with Independent Identity and Attribute Control

Generating realistic images with multiple distinct subjects—each retaining their unique identity and visual attributes like pose, lighting, or clothing style—has…

NextStep-1: High-Fidelity Autoregressive Image Generation Without Diffusion or Discrete Token Loss

Autoregressive (AR) models have long dominated natural language generation, but applying the same step-by-step prediction approach to images has been…

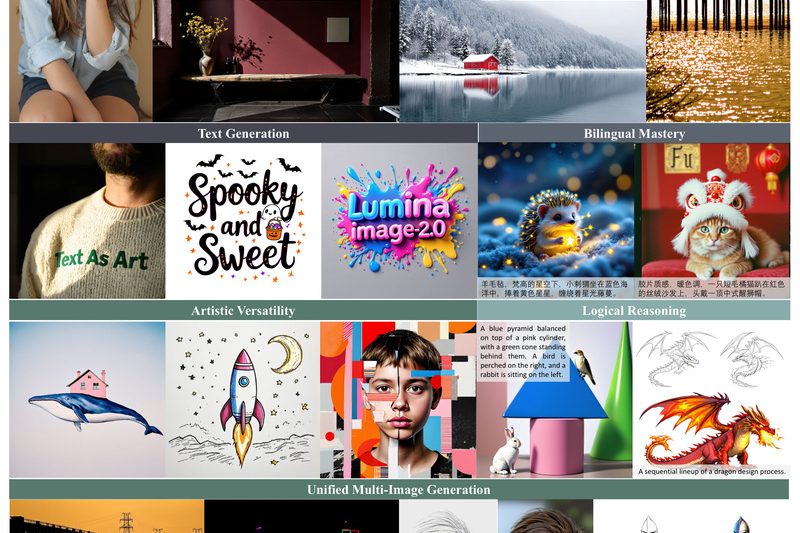

Lumina-Image 2.0: High-Quality, Efficient Text-to-Image Generation with Unified Architecture and Strong Open-Source Support

Lumina-Image 2.0 is a state-of-the-art open-source text-to-image (T2I) generation framework that delivers exceptional visual fidelity and prompt adherence while maintaining…

HiDream-I1: Generate and Edit High-Quality Images in Seconds with Sparse Diffusion Transformer

The rapid evolution of AI-driven image generation has unlocked incredible creative potential—but often at a steep cost: slow inference, massive…

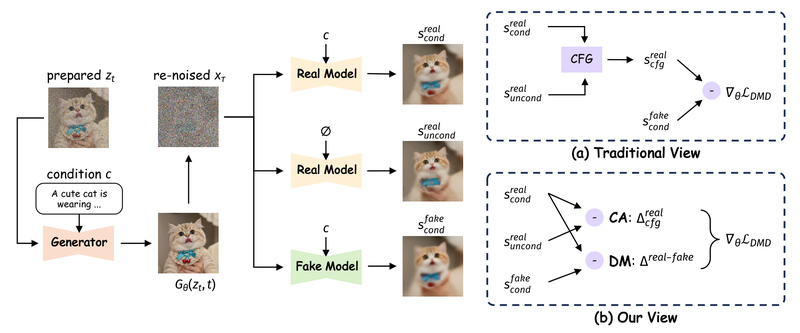

Decoupled DMD: Unlock Ultra-Fast, High-Quality Image Generation with 8-Step Distillation

If you’re building or evaluating text-to-image systems that demand both speed and visual fidelity, Decoupled DMD offers a breakthrough in…

RPG-DiffusionMaster: Generate Complex, Compositional Images from Text—No Retraining Needed

Text-to-image generation has made remarkable strides, yet even state-of-the-art models like DALL·E 3 or Stable Diffusion XL (SDXL) often stumble…

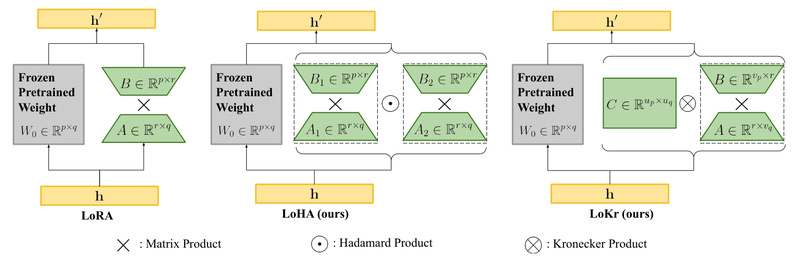

LyCORIS: Customize Stable Diffusion Without Retraining the Whole Model – Flexible, Lightweight Fine-Tuning for Text-to-Image Generation

If you’re working with text-to-image models like Stable Diffusion, you’ve likely faced the trade-off between customization and efficiency. Full fine-tuning…