Image-to-image translation is a powerful capability in computer vision—but real-world applications often face two stubborn roadblocks: the absence of aligned (paired) training images and the need for multiple plausible outputs from a single input. DRIT (Diverse Image-to-Image Translation via Disentangled Representations) directly tackles both challenges. Developed by researchers at UC Merced and published at ECCV 2018 (with an extended journal version as DRIT++ in IJCV 2020), this method enables practitioners to generate varied, high-quality translations between visual domains using only unpaired datasets.

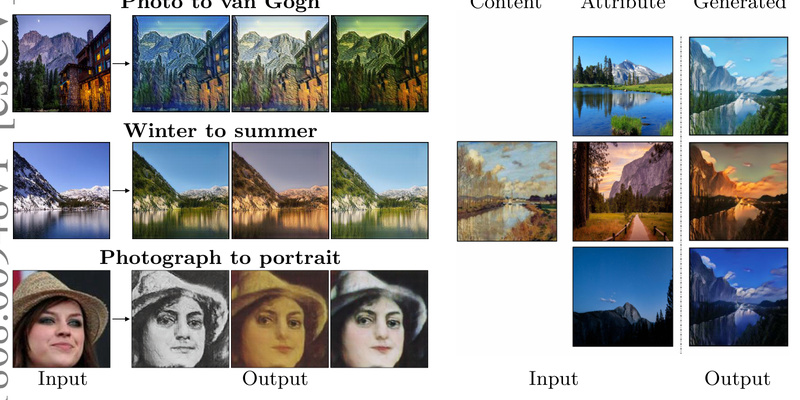

What makes DRIT particularly valuable is its use of disentangled representations: it separates an image’s domain-invariant content (e.g., structure, pose, layout) from its domain-specific attributes (e.g., color palette, texture, artistic style). At test time, you can combine a fixed content encoding with randomly sampled or user-specified attribute vectors to produce diverse, realistic outputs—no pixel-to-pixel correspondences required during training.

For engineers, researchers, and product teams working under data constraints, DRIT offers a practical path to creative, flexible image synthesis without the costly and often impossible task of collecting aligned image pairs.

Key Strengths That Solve Real Problems

1. Diverse Outputs from a Single Input

Unlike deterministic models (e.g., early CycleGAN variants), DRIT explicitly supports stochastic generation. Feed it one summer landscape photo, and it can output dozens of distinct—but equally plausible—winter versions, each with different snow coverage, lighting, or atmospheric effects. This is crucial for applications like content creation, data augmentation, or interactive design tools where variety matters.

2. Works Entirely with Unpaired Data

DRIT assumes only that you have two sets of images from different domains (e.g., photos and paintings), with no need for matching examples. This dramatically lowers the barrier to real-world deployment, especially in domains where alignment is infeasible—such as translating medical scans across modalities or converting product photos into artistic renditions.

3. Disentangled Representations Enable Control

By design, DRIT’s architecture isolates content and attribute spaces. This separation isn’t just theoretical—it gives users practical control at inference:

- Random sampling: Draw attribute vectors from a learned distribution to explore diverse styles.

- Attribute transfer: Encode attributes from a reference image (e.g., a Van Gogh painting) and apply them to a new photo, preserving its structure while adopting the target style.

4. Practical Enhancements for Stability and Quality

The DRIT++ extension introduces mode-seeking regularization (enabled by default) to discourage the generator from collapsing to a single output, thereby preserving diversity. It also supports multi-scale discriminators and feature-wise transformation (instead of simple concatenation) for tasks involving significant shape changes—like cat-to-dog translation—leading to more coherent and varied results.

Ideal Use Cases for Practitioners

DRIT excels in scenarios where both diversity and realism are required, but ground-truth paired data is unavailable:

- Artistic style transfer: Convert photographs into diverse painterly renditions (e.g., portrait → impressionist, cubist, or watercolor styles) using unpaired datasets from WikiArt and CelebA.

- Seasonal or weather translation: Generate multiple winter scenes from a single summer photo, useful for autonomous driving simulation or tourism apps.

- Cross-species or cross-category synthesis: Transform a cat image into various dog breeds—valuable for data augmentation in animal recognition systems.

- Domain adaptation: Fine-tune models on synthetic-to-real translations (e.g., MNIST → MNIST-M) without requiring pixel-aligned examples, improving robustness in downstream tasks.

In all these cases, DRIT sidesteps the need for labor-intensive annotation or alignment while delivering outputs that are both visually convincing and meaningfully varied.

How to Get Started with DRIT in Practice

Getting DRIT up and running is straightforward for teams familiar with PyTorch:

- Install dependencies: Python 3.5+, PyTorch 0.4.0, TensorboardX, and TensorFlow (for TensorBoard logging). A Dockerfile is provided for reproducible environments.

- Prepare data: Organize your unpaired datasets into standard folders (

trainA,trainB,testA,testB). No additional preprocessing is needed—DRIT handles variable input sizes thanks to adaptive pooling. - Train:

- For tasks with minimal structural change (e.g., summer ↔ winter), use default concatenation:

python3 train.py --dataroot ../datasets/yosemite --name yosemite

- For tasks with significant shape variation (e.g., photo ↔ painting), enable feature-wise transformation:

python3 train.py --dataroot ../datasets/portrait --name portrait --concat 0

- For tasks with minimal structural change (e.g., summer ↔ winter), use default concatenation:

- Generate results:

- Random diversity: Sample attributes randomly to produce varied outputs:

python3 test.py --dataroot ../datasets/yosemite --name yosemite_random --resume ../models/example.pth

- Attribute transfer: Use attributes from reference images:

python3 test_transfer.py --dataroot ../datasets/yosemite --name yosemite_encoded --resume ../models/example.pth

- Random diversity: Sample attributes randomly to produce varied outputs:

Training time can be reduced by setting --d_iter 1, and visualization overhead minimized with --no_img_display if only monitoring losses.

Practical Limitations and Considerations

While DRIT is powerful, it’s not a one-size-fits-all solution:

- Compute intensity: Training requires substantial GPU memory, especially with multi-scale discriminators.

- Hyperparameter sensitivity: The choice between

--concat 0(feature-wise transform) and default concatenation significantly impacts output quality and diversity—select based on whether your task involves shape deformation. - No pre-trained models included by default: The repository provides scripts to download models, but availability may vary; training from scratch may be necessary for novel domains.

- Domain-level assumption: DRIT assumes that the two domains share high-level semantic content (e.g., landscapes, faces). It may struggle with very dissimilar domains (e.g., X-rays to satellite images) without careful tuning or architectural adaptation.

Summary

DRIT stands out as a flexible, well-engineered solution for diverse image-to-image translation in data-limited settings. By leveraging disentangled representations and unpaired training, it delivers both creative freedom and technical robustness—making it an excellent choice for research prototyping, visual content generation, or domain adaptation pipelines where paired data is impractical. If your project demands multiple realistic outputs from a single input and you’re working with unpaired datasets, DRIT offers a compelling, production-ready pathway forward.