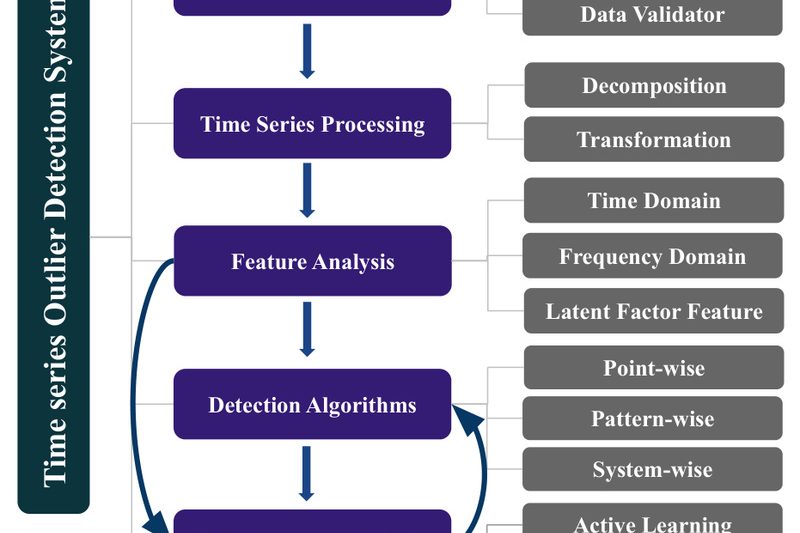

In modern data-driven operations—whether monitoring industrial sensors, analyzing financial transactions, or securing IT infrastructure—unexpected anomalies can signal critical failures, fraud,…

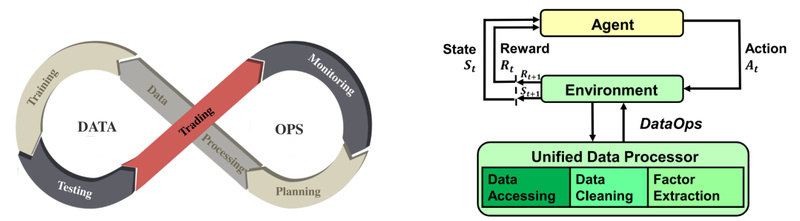

FinRL-Meta: Build Realistic Financial Reinforcement Learning Agents Without the Data Headache

Financial markets are among the most complex and noisy environments for deploying reinforcement learning (RL) agents. Unlike simulated games or…

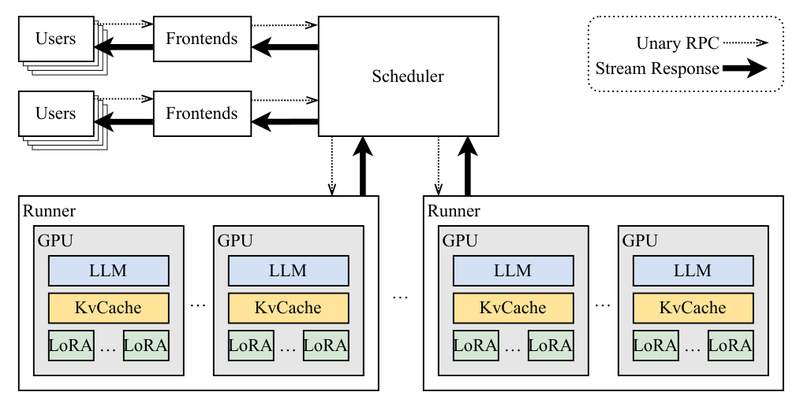

Punica: Serve Dozens of LoRA Models on One GPU—12x Faster, 2ms Overhead

Deploying multiple fine-tuned large language models (LLMs) used to mean multiplying your GPU costs—until Punica arrived. If you’re managing dozens…

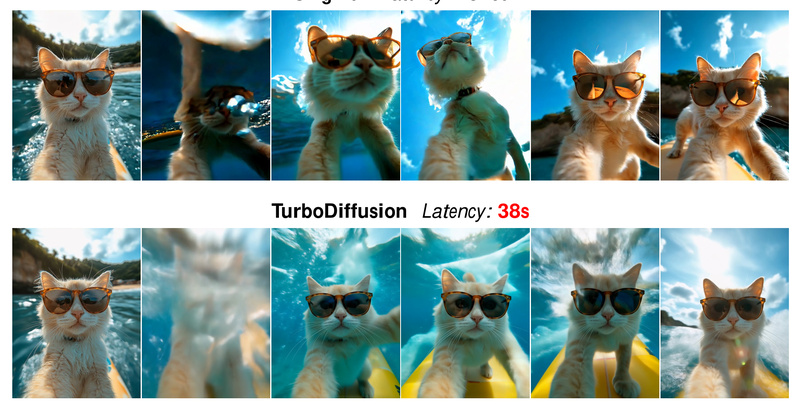

TurboDiffusion: Generate High-Quality AI Videos in Seconds Instead of Minutes on a Single GPU

Video generation using diffusion models has long suffered from a crippling bottleneck: speed. Even the most advanced models can take…

Emu3.5: A Native Multimodal World Model for Unified Vision-Language Generation and Reasoning

Imagine a single AI model that doesn’t just “see” or “read”—but seamlessly blends images and text in both input and…

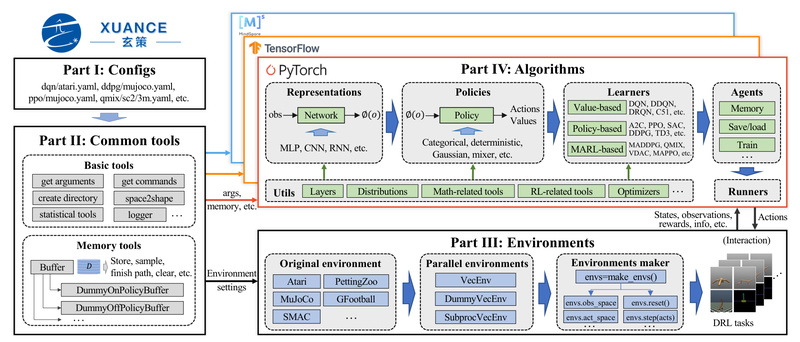

XuanCe: A Unified Deep Reinforcement Learning Library for Reliable, Cross-Framework AI Development

Deep reinforcement learning (DRL) holds immense promise—from robotic control and autonomous systems to multi-agent coordination and game AI. Yet for…

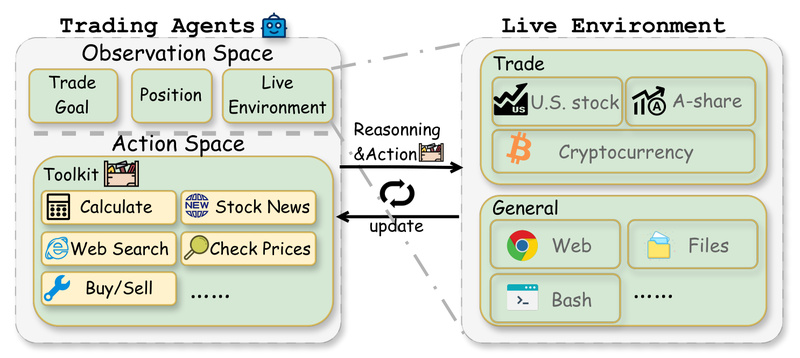

AI-Trader: Benchmark Autonomous LLM Agents in Real Financial Markets with Zero Human Intervention

Evaluating whether large language models (LLMs) can truly function as autonomous decision-makers in dynamic, real-world environments remains a fundamental challenge…

OmDet: Real-Time Open-Vocabulary Object Detection with Transformer Speed and Zero-Shot Accuracy

OmDet is a breakthrough in open-vocabulary object detection (OVD)—a vision-language paradigm that enables models to recognize not just pre-defined object…

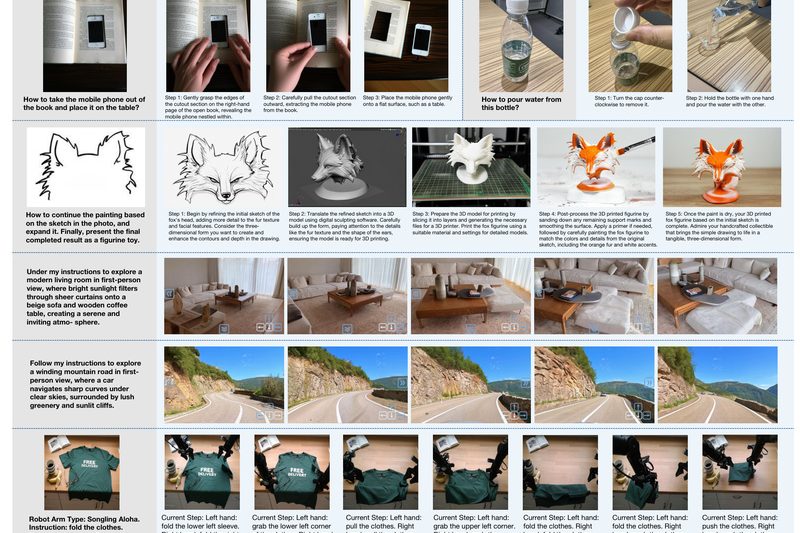

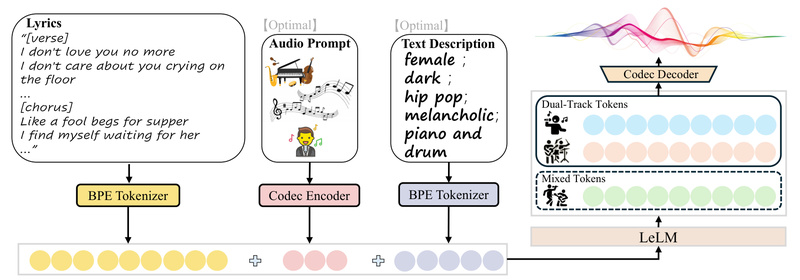

LeVo: Generate Full-Length, High-Fidelity Songs with Perfect Vocal-Instrument Harmony—Even on Consumer GPUs

LeVo is a breakthrough in open-source AI music generation. Unlike many existing tools that produce fragmented, low-quality, or inconsistent audio,…

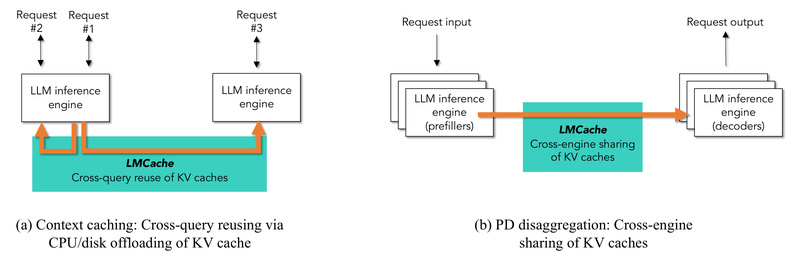

LMCache: Slash LLM Inference Latency and Multiply Throughput with Enterprise-Grade KV Cache Reuse

Deploying large language models (LLMs) at scale introduces a familiar bottleneck: the growing size of Key-Value (KV) caches rapidly outpaces…