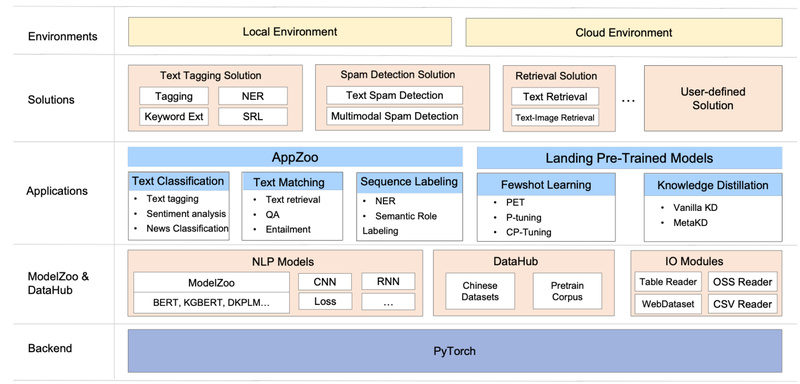

Natural Language Processing (NLP) has been revolutionized by pre-trained language models (PLMs), but turning these powerful models into real-world applications…

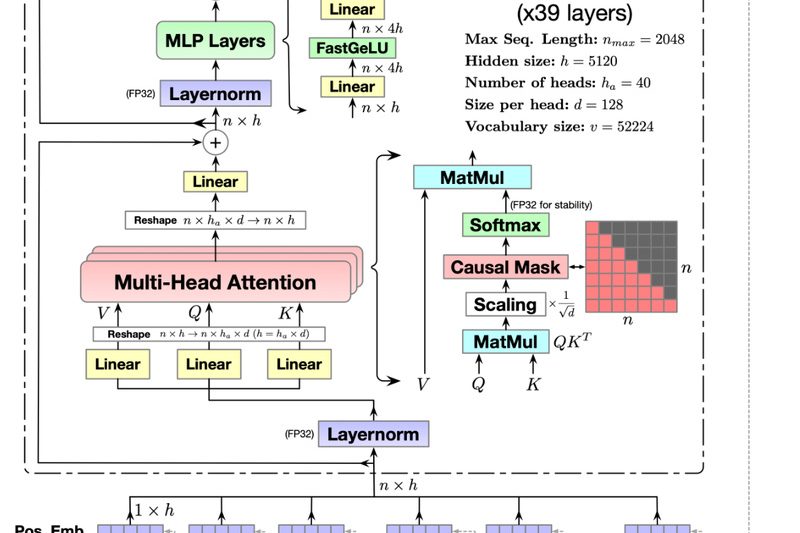

CodeGeeX: Open-Source Multilingual Code Generation That Boosts Developer Productivity Across 23 Languages

For software teams working across multiple programming languages—or developers tired of vendor lock-in with proprietary AI coding tools—CodeGeeX offers a…

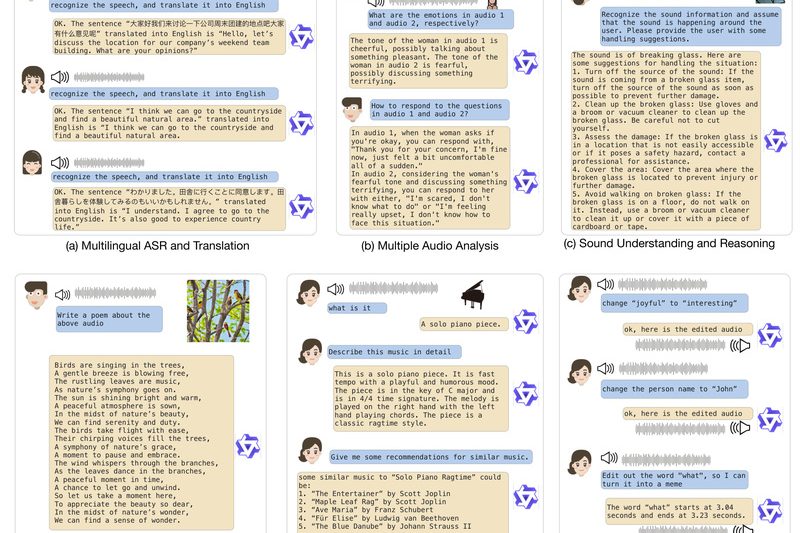

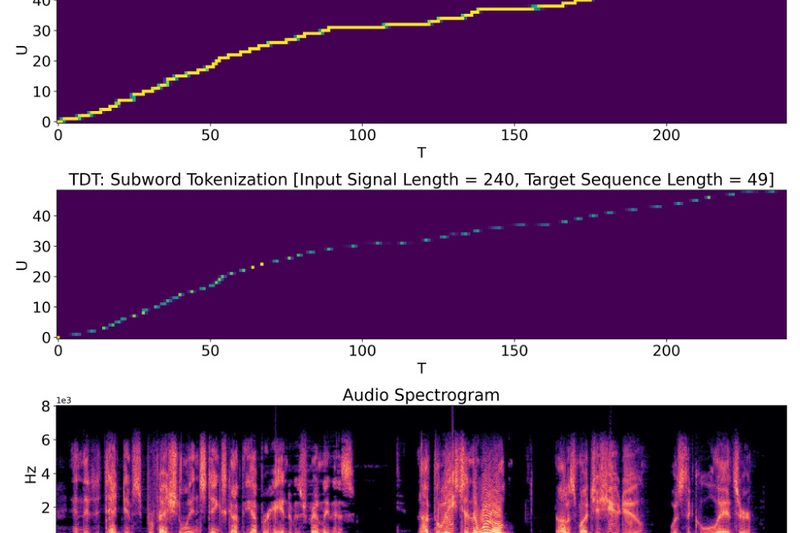

Qwen-Audio: Unified Audio-Language Understanding for Speech, Music, and Environmental Sounds Without Task-Specific Tuning

Audio is one of the richest yet most fragmented modalities in artificial intelligence. Traditional systems often require separate models for…

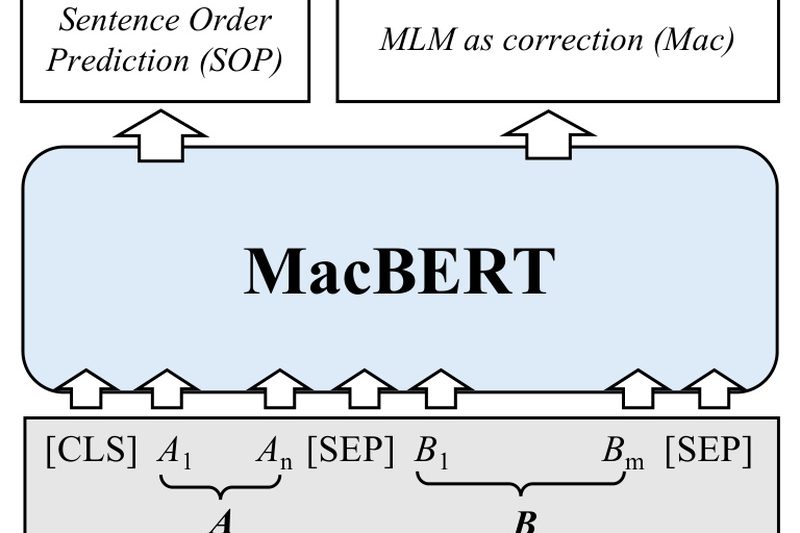

Chinese-BERT-wwm: High-Performance Chinese Language Understanding with Whole Word Masking for Better Word-Level Context

Chinese-BERT-wwm is a family of pre-trained language models specifically engineered to overcome a key limitation of the original BERT when…

Chinese CLIP: Enable Zero-Shot Chinese Vision-Language AI Without Custom Training

Multimodal AI models like OpenAI’s CLIP have transformed how developers build systems that understand both images and text. But there’s…

XLNet: Bidirectional Language Understanding Without Masked Input Limitations

XLNet is a breakthrough in language modeling that effectively bridges the gap between autoregressive (AR) and autoencoding (AE) pretraining paradigms.…

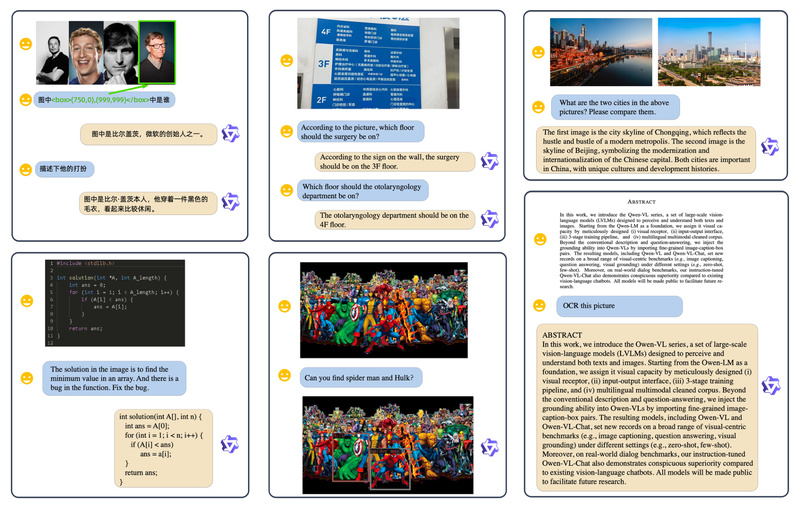

Qwen-VL: Open-Source Vision-Language AI for Text Reading, Object Grounding, and Multimodal Reasoning

In the rapidly evolving landscape of multimodal artificial intelligence, developers and technical decision-makers need models that go beyond basic image…

NeMo: Build Production-Grade Speech, LLM, and Multimodal AI Faster with NVIDIA’s Optimized Framework

NVIDIA NeMo is a cloud-native, open-source framework designed for developers, research engineers, and technical decision-makers who need to build, customize,…

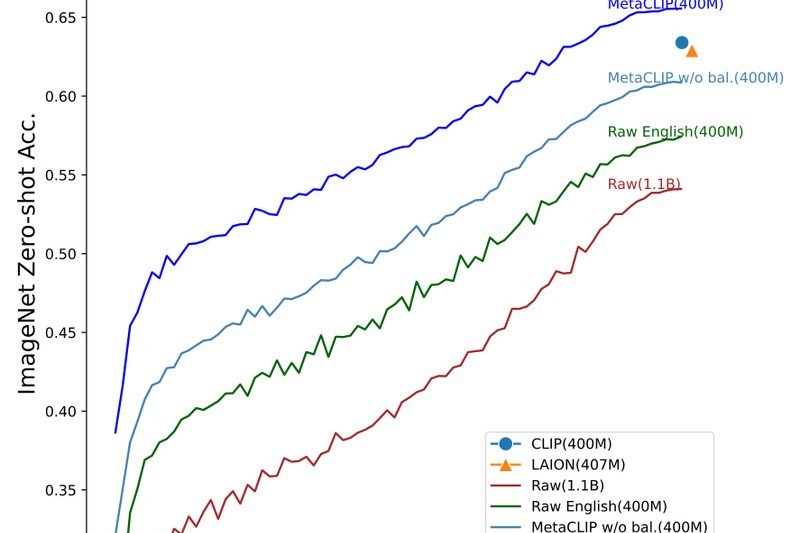

MetaCLIP: Superior Vision-Language Models Through Transparent, High-Quality Data Curation

If you’ve worked with OpenAI’s CLIP, you know its power—but also its opacity. CLIP revolutionized zero-shot vision-language understanding, yet it…

SPIN: Boost Your LLM’s Performance Without New Human Annotations—Just Use Self-Play Fine-Tuning

Imagine you’ve fine-tuned a language model using a standard Supervised Fine-Tuning (SFT) dataset—like Zephyr-7B on UltraChat—but you don’t have access…