Generative AI has made remarkable strides in image synthesis, yet many tools force users to choose between style-driven and subject-driven generation as separate, often conflicting tasks. Style transfer models capture artistic essence but lose identity fidelity; subject customization models preserve who or what appears but struggle to adopt new visual styles. USO (Unified Style and Subject-Driven Generation) changes this paradigm by unifying both capabilities in a single, coherent framework—enabling users to stylize specific subjects without sacrificing identity or artistic coherence.

Developed by ByteDance’s Intelligent Creation Lab, USO leverages disentangled representation learning and style-aware reward optimization to jointly manage content (subject identity) and style (visual appearance). The result? High-fidelity images where a person, object, or scene retains its core identity while fully embracing a new artistic style—even from multiple reference images.

Why USO Solves a Real Pain Point

Traditional pipelines often require stitching together separate tools: one for identity preservation (e.g., DreamBooth, LoRA), another for style transfer (e.g., AdaIN, Neural Style Transfer). This leads to inconsistent outputs, complex workflows, and degraded quality when both goals are demanded simultaneously.

USO eliminates this fragmentation. Whether you’re a designer applying a brand’s visual language to diverse product photos, a creator generating personalized storybook illustrations, or a researcher exploring content-style decomposition, USO delivers coherent, controllable, and high-resolution (1024×1024) results in a single inference step.

Core Capabilities That Make USO Stand Out

Simultaneous Subject Consistency and Style Similarity

Unlike prior models that optimize for one objective at the expense of the other, USO is explicitly trained on triplets: a content image, a style image, and the resulting stylized output. Through dual training objectives—style alignment and content-style disentanglement—the model learns to isolate identity features from stylistic ones, then recompose them faithfully.

Flexible Input Modes for Diverse Creative Needs

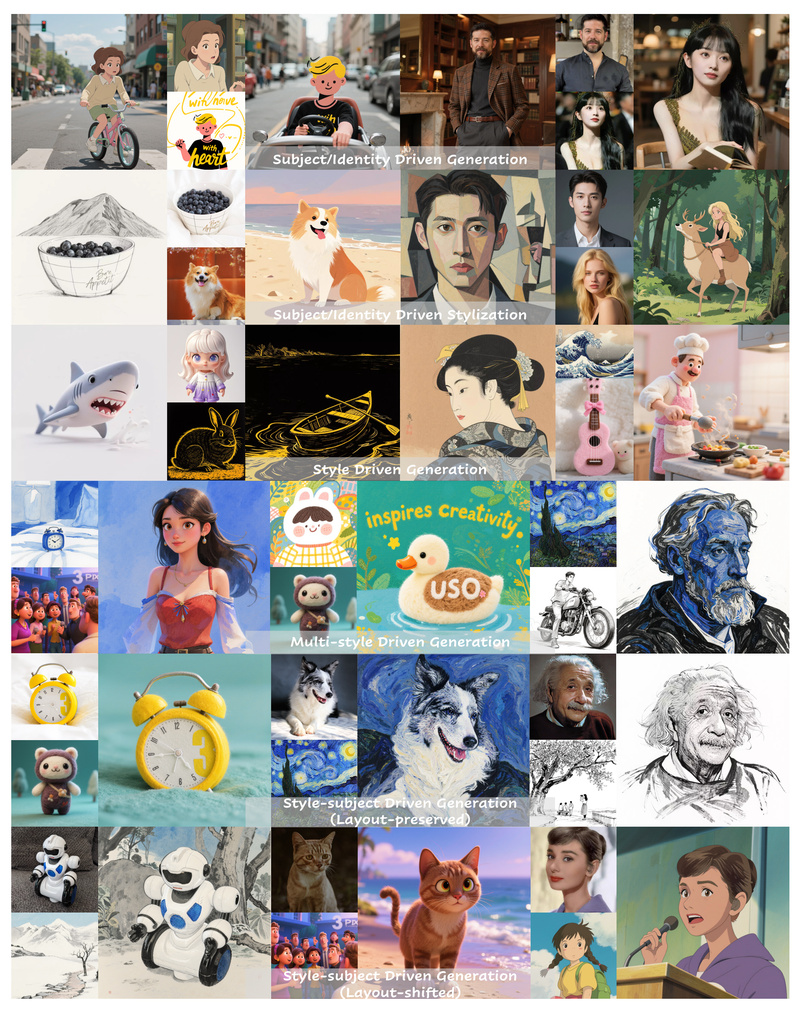

USO supports four key generation modes out of the box:

- Subject-only generation: Provide a reference image of a person or object and describe a new scene. USO places that subject naturally into the prompt-specified context while preserving identity (e.g., skin texture, facial structure).

- Style-only generation: Upload one or two style references and describe any scene. USO renders it in the target aesthetic (e.g., oil painting, anime, cyberpunk).

- Combined subject + style: Supply both a subject and a style reference. USO generates a new image of the subject in the style—ideal for character re-skinning, product redesign, or artistic reinterpretation.

- Layout-preserved stylization: Leave the prompt empty and provide a content image plus style(s). USO transfers the style while maintaining pose, composition, and spatial structure.

Native Integration with ComfyUI

For creators already using ComfyUI, USO is now natively supported as of version 0.3.57. Prebuilt workflows in the repository demonstrate how to combine USO with ControlNet, LoRA, or other nodes—enabling seamless integration into existing generative pipelines without code changes.

Optimized for Consumer Hardware

With the flux-dev-fp8 model variant and the --offload flag, USO runs on GPUs with as little as 16–18 GB VRAM, making high-quality unified generation accessible to creators without enterprise-grade hardware.

Practical Use Cases

- Personalized Content Creation: Generate images of a specific person (e.g., a client, a character) in varied artistic styles for books, ads, or social media—without retraining.

- Brand-Consistent Visual Design: Apply a company’s signature visual style (e.g., minimalist, retro, hand-drawn) to diverse product shots or marketing assets while ensuring subject clarity.

- Creative Prototyping: Rapidly explore “what if” scenarios—e.g., “What would this architect’s building look like in Van Gogh’s brushstrokes?”—with one model instead of multiple tools.

- Layout-Aware Stylization: Redesign UI mockups, fashion sketches, or architectural plans by transferring styles while preserving structural integrity.

Getting Started Is Straightforward

Installation requires Python 3.10–3.12 and PyTorch 2.4. After setting up a Hugging Face token and downloading weights via the provided script, you can run inference with intuitive command-line arguments:

# Subject-driven example python inference.py --prompt "A woman giving a speech on stage." --image_paths "identity.jpg" --width 1024 --height 1024 # Style + subject example python inference.py --prompt "A dog wearing sunglasses riding a skateboard." --image_paths "dog.jpg" "cartoon_style.webp" --width 1024 --height 1024 # Low-VRAM mode python inference.py --prompt "..." --image_paths "img.jpg" --offload --model_type flux-dev-fp8

The project includes clear guidance on prompt engineering: use natural language for layout-shifted generation (“A man painting in a studio”) versus instructive phrasing for layout-preserved stylization (“Transform this photo into watercolor style”).

Limitations and Considerations

- Model weights require a Hugging Face token for download.

- Even in FP8 mode, ~16 GB VRAM is needed—still out of reach for some consumer GPUs.

- As of now, training code and the full triplet dataset are not public, limiting reproducibility for full pipeline research.

- The project is released under Apache 2.0 for research and responsible use, with a clear disclaimer against misuse.

Summary

USO bridges a critical gap in generative AI by unifying subject identity and artistic style in a single, practical model. It replaces fragmented workflows with a coherent, high-performance solution that respects both who or what is in the image and how it should look. With native ComfyUI support, consumer-GPU optimization, and flexible input modes, USO is poised to become a go-to tool for creators, designers, and researchers who demand both fidelity and creativity—without compromise.