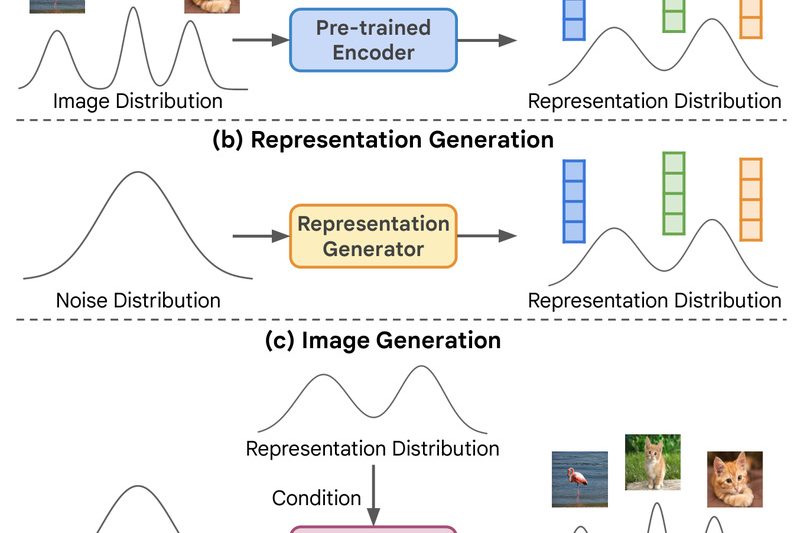

For years, unconditional image generation—creating realistic images without relying on human-provided class labels—has lagged significantly behind its class-conditional counterpart in…

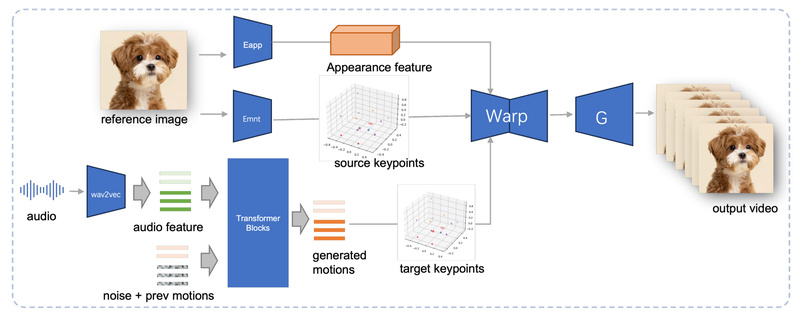

JoyVASA: Animate Human and Animal Portraits from Audio with Diffusion-Based Lip Sync and Head Motion

Audio-driven facial animation has long been a challenging yet highly valuable capability—from building expressive virtual agents to creating personalized pet…

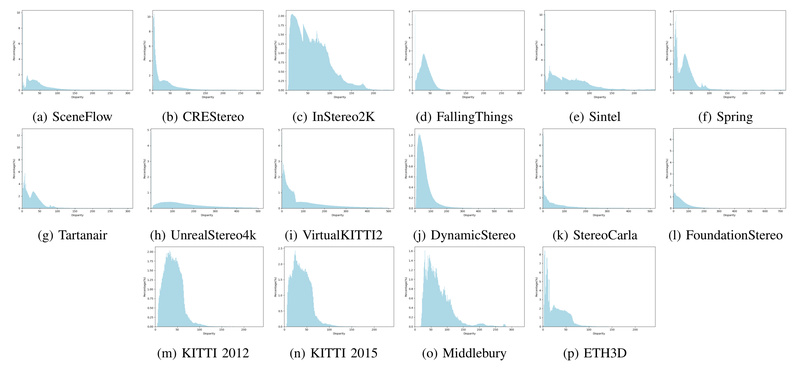

Stereo Anything: Zero-Shot Stereo Matching That Works Across Any Domain Without Retraining

Stereo matching—the task of finding corresponding pixels between left and right images to infer depth—is foundational to 3D vision systems…

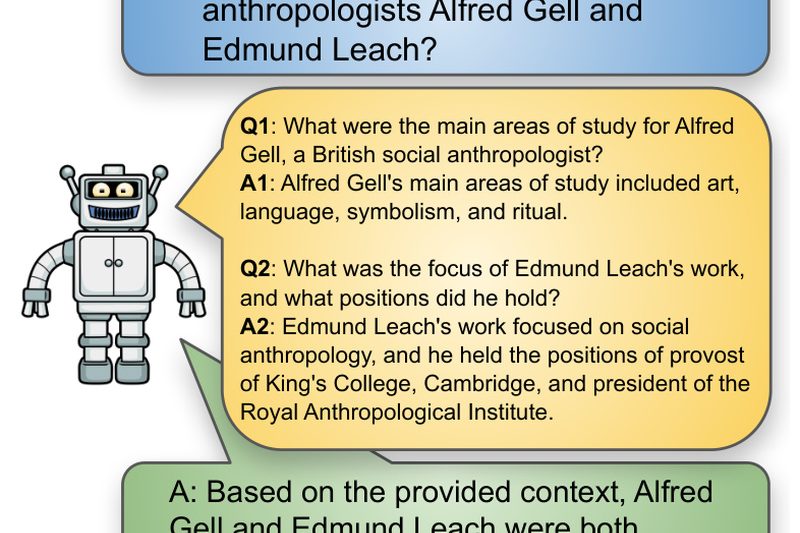

SQuARE: Boost LLM Reasoning with Self-Generated Questions—No Retraining Needed

Solving complex reasoning problems is a persistent challenge for Large Language Models (LLMs). While techniques like Chain-of-Thought (CoT) prompting have…

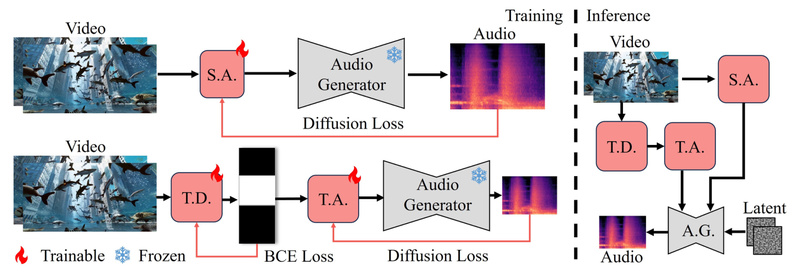

FoleyCrafter: Generate Lifelike, Synchronized Sound Effects for Silent Videos—Automatically

Silent videos—whether from AI-generated content, archival footage, gameplay recordings, or unfinished film prototypes—often lack the immersive quality that sound brings.…

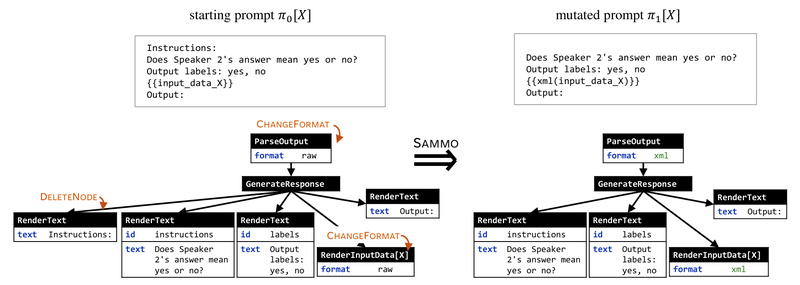

SAMMO: Optimize LLM Prompt Programs Like Code—Structure-Aware, Compile-Time Tuning for RAG, Instruction Refinement, and Prompt Compression

Modern LLM applications increasingly rely on complex, structured prompts—especially in scenarios like Retrieval-Augmented Generation (RAG), instruction-based tasks, and data labeling…

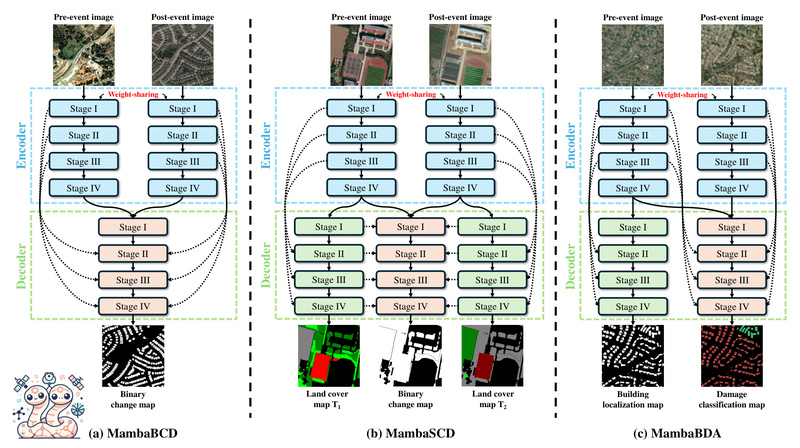

ChangeMamba: High-Accuracy, Low-Cost Change Detection for Remote Sensing Without CNN or Transformer Trade-Offs

Change detection in remote sensing—identifying what has changed between two satellite or aerial images taken at different times—is a critical…

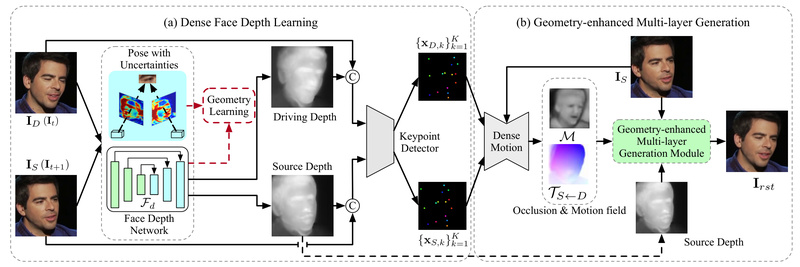

DaGAN++: Generate Realistic Talking Head Videos with Depth-Aware AI—No 3D Labels Needed

Creating lifelike talking head videos—where a static portrait speaks and moves in sync with an audio or driving video—has long…

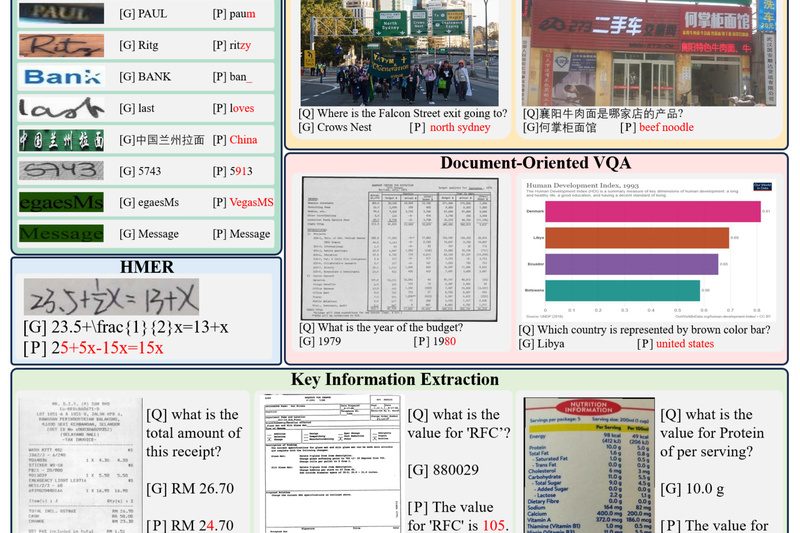

OCRBench: The Definitive Benchmark for Evaluating Real-World OCR Capabilities in Large Multimodal Models

Large Multimodal Models (LMMs) like GPT-4V and Gemini promise powerful vision-language understanding—but how well do they actually read text in…

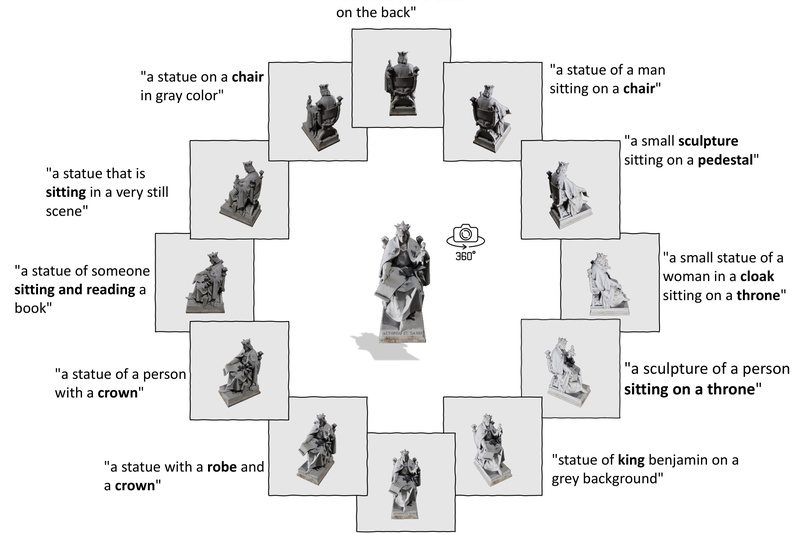

ULIP-2: Scalable Multimodal 3D Understanding Without Manual Annotations

Imagine building a system that can understand 3D objects as intuitively as humans do—recognizing a chair from its point cloud,…